News

Meet Kevin Weil, the Man Turning Sam Altman’s Visions Into Reality

Published

1 year ago

Kevin Weil showed no sign of the heat when he took to the stage at the Marriott Marquis in downtown San Francisco on an unseasonably sweaty early October morning.

Trim and tan, outfitted in the de rigueur Silicon Valley uniform of a slim-fit gray tee, stonewashed blue jeans, and an Apple Watch Ultra, the chief product officer of OpenAI talked easily about the ambitious artificial intelligence-powered future his red-hot employer was building.

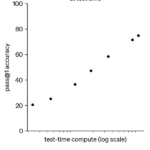

“You could imagine a world where you ask it a hard question about how you cure some particular form of cancer, and you let it think for five hours, five days, five months,” Weil prophesied in response to a question about the “reasoning capabilities” of AI.

But neither he nor his onstage interlocutor, Anyscale cofounder Robert Nishihara, ever acknowledged the elephant in the room: Weil wasn’t supposed to be there.

The event, the AI infrastructure conference Ray Summit, had originally booked OpenAI’s chief technology officer, Mira Murati, to speak. Just days before, the high-profile executive had abruptly quit, prompting the last-minute swap. Murati’s exit added to a long list of recent departures from OpenAI, one of the world’s most valuable and hyped startups, even as it closed a historic $6.6 billion funding round on the day Weil spoke.

If Sam Altman is the starry-eyed visionary of OpenAI, Weil is its executor. He leads a product team that turns blue-sky research into products and services it can sell, putting him at the center of a philosophical rift that has caused spectacular upheaval at the company, which was recently valued at $157 billion.

In two years, OpenAI went from a nonprofit lab nominally working to develop digital intelligence for the public good to a world-famous startup that puts out shiny new products and models every few months. The company is now attempting to become a for-profit operation to lure would-be backers to write bigger checks, which it needs to scale its business. Altman recently announced that the company’s flagship product, ChatGPT, now has 300 million weekly users, triple the number it had a year ago.

Along with this astronomical growth, OpenAI has suffered a brain drain: its chief research officer, its head of AGI readiness, and a co-lead of its video-generation model Sora have all left, and the list goes on. While this has set off alarm bells in some corners of the tech industry, it has also elevated the profile of the senior leaders who have remained. That includes Weil, a relative OpenAI newcomer who joined in June and rapidly became one of its most notable ambassadors.

At a point when employee voices of dissent were growing louder, 41-year-old Weil arrived as a steady-handed product guru with a Midas touch. He was a longtime Twitter insider who created products that made the social media company money during a revolving door of chief executives.

At Instagram, he helped kneecap Snapchat’s growth with competitive product releases such as Stories and live video. (Not everything he touched turned to gold, though. Weil also led Facebook’s headlong charge into financial services as a cofounder of Libra, its ill-fated stablecoin.)

Weil declined to be interviewed for this article, which is based on conversations with five former senior colleagues, four of whom spoke on the record, and his past public interviews.

Twitter and Facebook were no strangers to chaos and scandal, but even their most challenging times are rivaled by OpenAI’s workplace turmoil. There was a failed coup last year, bitter feuds among some workers, and an ongoing existential arms race to build “digital gods.”

Weil’s peers are certain he’s the man to promote harmony. He’s spent the better part of 15 years cranking out products that mostly delighted users and made money. He also has something his new employer desperately needs: The ability to pursue the company’s best interests and balance human emotions at the same time.

Kevin Weil isn’t a household name. But for those in the know in Silicon Valley, he’s something better: “He’s the get-shit-done guy,” said James Everingham, who worked with Weil at Instagram and Facebook.

Sarah Friar, OpenAI’s new head of finance, has long admired Weil’s product chops. Former Twitter boss Adam Bain called Weil “Twitter’s secret weapon” for driving ad revenue. Everingham also described Weil as a workhorse who never shrank from a deadline.

“He brings that stamina, that dogged focus on the outcome,” said April Underwood, a venture capitalist who worked closely with Weil on Twitter’s ad products.

Weil’s relentless work ethic and people skills are recurring themes among former colleagues. At Facebook, two colleagues said he would often badge into the office first and fire off messages late into the night.

One colleague from Planet, a satellite imagery company where Weil worked as president until May of 2024, recalled how he started each week by posting in Slack the top three things on his mind. According to Everingham, Weil’s a rare breed of product manager who codes almost as well as he writes memos. That endeared Weil to the engineers he needed to build products.

Weil shrugs off the pressures of work with long runs and Diet Mountain Dew. Every birthday, he runs his age in miles and, in June, marked his 41st year with a 41-mile jaunt near his home in the posh California suburb of Portola Valley, according to a public Instagram post. Weil lives with his venture capitalist wife, Elizabeth, and three children.

“He is a little bit superhuman in just the sheer amount that he works and works out,” said Ashley Johnson, chief financial officer and now president of Planet.

Brian Ach/Getty Images for TechCrunch

Weil set aside a Stanford doctorate in theoretical particle physics to carve out a path in tech, according to a 2017 speech he gave at his alma mater. In 2009, Weil landed at Twitter as a data scientist. At the time, Twitter had little revenue, never mind profit, to show for its many millions of users. That left investors wondering how Twitter would translate its popularity into money.

When Twitter began developing ads a year later, Weil stepped up to lead the effort. Katie Jacobs Stanton, a venture capitalist who overlapped with Weil at Twitter, said employees debated how to show ads in a way that didn’t degrade the user experience, pitting engineers against marketers. Weil threaded the needle. Under his oversight, Twitter launched ads that looked like tweets inside the feed. AdAge reported in 2011 that hundreds of brands had embraced the format, helping to establish the cash flow that made Twitter’s IPO possible in 2013.

Then, in early 2016, Instagram cofounder Kevin Systrom asked Weil to dinner as Snapchat was nipping at the Facebook-owned photo app’s heels.

Instagram needed to get users, especially teens, to post more; Systrom wanted a pinch-hitter to get new features designed and added to the app and into the hands of Instagram users. Weil told CNBC in 2017 that he had already resigned from Twitter with plans to train for a 50-mile ultramarathon snaking the American River in California’s Central Valley. He took the Instagram job and, a month later, finished fifth in the race.

The product leader wasted no time in the photo-sharing battle. In just a few months, Instagram rolled out a feature similar to Snapchat’s disappearing photos and videos, then added popular face filters and also introduced a feed-ranking algorithm to highlight more relevant content. According to Instagram, its user base doubled within two years of Weil’s arrival, reaching 1 billion monthly users by 2018; Snapchat’s earnings statements during the same period indicated that its user growth had flatlined.

Everingham, his former colleague, recounted how Weil identified Stories as Instagram’s killer feature and assembled a nimble team of engineers under the CEO’s supervision to build it.

“He had this clarity of thinking I haven’t seen in anyone else,” said Everingham, now an engineering leader of developer infrastructure at Meta.

Weil’s tenure at Instagram was defined by a controversial strategy: borrowing liberally from the competition. The features that became Instagram staples had their genesis in a rival’s playbook, which neither Systrom nor Weil denied in interviews with Vox and TechCrunch over the years.

This strategy is particularly poignant as Weil steps into his new role at OpenAI, a company navigating a thicket of copyright lawsuits. News outlets, authors, and celebrities have sued OpenAI over using their work to train its large language models.

That’s far from the only struggle the product chief faces. He’s positioned as one of Altman’s top lieutenants at a time when OpenAI’s famed brain trust is leaving in droves, often to start competitor companies that may threaten OpenAI’s early dominance in the space.

Former OpenAI employees and siblings Dario and Daniela Amodei founded Anthropic, one of OpenAI’s most notable competitors, in 2021. Former chief scientist Ilya Sutskever has raised $1 billion for his new venture, Safe Superintelligence. Ex-researcher Aravind Srinivas is working on an AI-powered search engine, Perplexity. In early December, Murati, the former CTO, told Wired she was “figuring out” what her new venture would look like, though it’s unclear if her startup will directly compete with OpenAI.

Patrick T. Fallon/Getty Images

Another threat comes from open-source AI models championed by the likes of Meta. If these free-to-use systems prove good enough for most users, it will make it far harder for OpenAI to effectively monetize its AI models and, ultimately, turn a profit.

With over $20 billion in funding, according to PitchBook data, and only an estimated $3.7 billion in revenue in 2024 plus losses of $5 billion (according to leaked documents obtained by The New York Times in September), OpenAI still faces a long road to prove that it can deliver a return on the unprecedented volumes of capital plowed into the company. And that’s without even getting into the growing concern that improvements in AI models are slowing.

Onstage at the Ray Summit in October, Weil shrugged off the threat of competition. When asked about whether the current gap in quality between open-source models and OpenAI’s premium AI products will shrink, he quipped: “I mean, we’re certainly going to do our best to make it grow.”

In theory, a proven leader like Weil could help guide the operation past the power struggles and talent losses toward a more steady state. By all accounts, he’s a deft conciliator who can empathize with the needs and concerns of multiple stakeholders.

“He’s somewhat of a diplomat,” said Jacobs Stanton, his former Twitter colleague.

At Facebook, where Weil helped develop a stablecoin backed by a basket of international currencies, he had to mediate between crypto natives, the product purists who wanted to take a more user-friendly, less decentralized approach, and policymakers, according to two former Facebook colleagues. The project ultimately couldn’t overcome regulatory roadblocks and an exodus of corporate partners; Meta shuttered the project in 2022, selling $182 million worth of assets to Silvergate Bank.

Horacio Villalobos/Corbis via Getty Images

How Weil’s experience maps onto his current role remains to be seen. With its several thousand employees, regulators scrutinizing its every move, and 300 million weekly active users of ChatGPT, OpenAI is more complex than any company Weil’s stepped into before. In just six months since his arrival, OpenAI has rolled out a large language model that can solve more complex problems via a process the company calls “reasoning,” and a search engine within ChatGPT.

Additionally, OpenAI launched a voice mode to talk to ChatGPT, which Weil personally tested as a “universal translator” during recent trips to Seoul and Tokyo. Reflecting on the release, Weil shared on LinkedIn, “It feels normal to me now, but two years ago I wouldn’t have believed it was possible.”

Last week OpenAI announced an ambitious “12 days of shipmas,” a festive product sprint likely to keep Weil working long hours. While he’s managed to keep the proverbial plates spinning in his professional life, there’s been one noticeable casualty: his workout routine. App data from Strava shows that he’s been logging fewer hours of cycling and running each month since he joined OpenAI.

Asked about Weil’s exercise, OpenAI spokesperson Niko Felix said Weil recorded 96 minutes of physical activity a day in November. “I would say he’s doing quite alright,” Felix said.

Melia Russell is a senior correspondent at Business Insider, covering startups and venture capital. Her Signal number is +1 603-913-3085, and her email is [email protected].

Rob Price is a senior correspondent for Business Insider and writes features and investigations about the technology industry. His Signal number is +1 650-636-6268, and his email is [email protected].

5 ChatGPT Prompts That Can Help Teens Launch a Startup

Published

10 months ago on

June 5, 2025

Teen entrepreneur using ChatGPT to help with their business

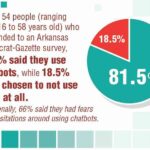

Teen entrepreneurship continues to rise. According to Junior Achievement research, 66% of U.S. teens ages 13 to 17 say they are likely to consider starting a business as adults, and the Global Entrepreneurship Monitor 2023-2024 finds that 24% of 18- to 24-year-olds are currently entrepreneurs. These young founders aren’t just dreaming; they are building real companies that generate revenue and create social impact, and they are using ChatGPT prompts to help them.

At WIT (Whatever It Takes), the organization I founded in 2009, we have worked with more than 10,000 young entrepreneurs. Over the past year, I have observed a shift in how teens approach business planning. With our guidance, they are using AI tools like ChatGPT not as shortcuts but as strategic thinking partners to clarify ideas, test concepts, and accelerate execution.

The most successful teen entrepreneurs have discovered specific prompts that help them move from one idea to the next. These aren’t generic brainstorming sessions: they are using targeted questions that address the unique challenges young founders face, namely limited resources, school commitments, and the need to prove their concepts quickly.

Here are five ChatGPT prompts that consistently help teen entrepreneurs build businesses that matter.

1. The Problem-First Discovery ChatGPT Prompt

“I notice that [specific group of people] struggle with [specific problem I’ve observed]. Help me better understand this problem by explaining: 1) why this problem exists, 2) what solutions currently exist and why they are insufficient, 3) how much people might pay to solve this, and 4) three specific ways I could test whether this is a real problem worth solving.”

A teen might use this prompt after noticing that students at school struggle to pay for lunch. Instead of assuming they understand the full scope, they could ask ChatGPT to research school lunch debt as a systemic problem. This research may lead them to create a product-based business whose proceeds help pay off lunch debt, combining profit with purpose.

Teens notice problems differently than adults because they experience unique frustrations, from school organization challenges to social media to environmental concerns. According to Square’s research on Gen Z entrepreneurs, 84% plan to be business owners within five years, making them ideal candidates for problem-solving ventures.

2. The Resource Reality Check ChatGPT Prompt

“I’m [age] years old with approximately [dollar amount] to invest and [number] hours per week available between school and other commitments. Given these constraints, what are three business models I could realistically launch this summer? For each option, include startup costs, time requirements, and the first three steps to get started.”

This prompt addresses the elephant in the room: most teen entrepreneurs have limited money and time. When a 16-year-old entrepreneur uses this approach to evaluate a greeting card business concept, they may discover that they can start with $200 and scale gradually. By being realistic about constraints up front, they avoid overcommitting and can build toward sustainable revenue goals.

According to Square’s Gen Z report, 45% of young entrepreneurs use their savings to start businesses, with 80% launching online or with a mobile component. These data support the effectiveness of constraint-based planning: when teens work within realistic limitations, they create more sustainable business models.

3. The Customer Voice Simulator ChatGPT Prompt

“Act as a [specific demographic] and give me honest feedback on this business idea: [describe your concept]. What would excite you about it? What concerns would you have? How much would you realistically pay? What would need to change for you to become a customer?”

Teen entrepreneurs often struggle with customer research because they can’t easily survey large groups or hire market research firms. This prompt helps simulate customer feedback by having ChatGPT adopt specific personas.

A teen developing a podcast for teen athletes could use this approach by asking ChatGPT to respond as different types of teen athletes. This helps identify content topics that resonate and messaging that feels authentic to the target audience.

The prompt works best when you get specific about demographics, pain points, and contexts. “Act as a high school senior applying to college” produces better insights than “act as a teenager.”

4. The Minimum Viable Test Designer ChatGPT Prompt

“I want to test this business idea: [describe concept] without spending more than [budget amount] or more than [time commitment]. Design three simple experiments I could run this week to validate customer demand. For each test, explain what I would learn, how to measure success, and what results would indicate I should move forward.”

This prompt helps teens adopt the lean startup methodology without getting lost in business jargon. The focus on “this week” creates urgency and prevents endless planning without action.

A teen who wants to test a clothing line concept could use this prompt to design simple validation experiments, such as posting design mockups on social media to gauge interest, creating a Google Form to collect preorders, and asking friends to share the concept with their networks. These tests cost nothing but provide crucial data about demand and pricing.

5. The Pitch Clarity Generator ChatGPT Prompt

“Turn this business idea into a clear 60-second explanation: [describe your business]. The explanation should include: the problem it solves, your solution, who it helps, why they would choose it over alternatives, and what success looks like. Write it in conversational language a teenager would actually use.”

Clear communication separates successful entrepreneurs from those with good ideas but poor execution. This prompt helps teens distill complex concepts into compelling explanations they can use everywhere, from social media posts to conversations with potential mentors.

The emphasis on “conversational language a teenager would actually use” matters. Many business pitch templates sound artificial when delivered by young founders. Authenticity matters more than corporate jargon.

Beyond ChatGPT Prompts: Implementation Strategy

The difference between teens who use these prompts effectively and those who don’t comes down to follow-through. ChatGPT provides direction, but action creates results.

The most successful young entrepreneurs I work with use these prompts as starting points, not endpoints. They take the AI-generated suggestions and immediately test them in the real world. They call potential customers, build simple prototypes, and iterate based on real feedback.

Recent Junior Achievement research shows that 69% of teens have business ideas but feel uncertain about the process of getting started, with fear of failure the top concern for 67% of potential teen entrepreneurs. These prompts address that uncertainty by breaking abstract concepts down into concrete next steps.

The Bigger Picture

Teen entrepreneurs using AI tools like ChatGPT represent a shift in how entrepreneurship education is happening. According to Global Entrepreneurship Monitor research, young entrepreneurs are 1.6 times more likely than adults to want to start a business, and they are particularly active in the technology, food and beverage, fashion, and entertainment sectors. Instead of waiting for formal entrepreneurship classes or MBA programs, these young founders are accessing strategic thinking tools immediately.

This trend aligns with broader shifts in education and the workforce. The World Economic Forum identifies creativity, critical thinking, and resilience as top skills for 2025, capabilities that entrepreneurship naturally develops.

Programs like WIT provide structured support for this journey, but the tools themselves are becoming increasingly accessible. A teen with internet access can now tap business planning resources that were previously available only to established entrepreneurs with significant budgets.

The key is to use these tools thoughtfully. ChatGPT can accelerate thinking and provide frameworks, but it can’t replace the hard work of building relationships, creating products, and serving customers. The best business idea isn’t the most original; it’s the one that solves a real problem for real people. AI tools can help identify those opportunities, but only action can turn them into businesses that matter.

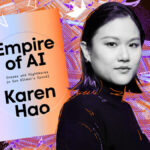

ChatGPT vs. Gemini: I’ve Tested Both, and One Is Definitely Better

Published

10 months ago on

June 5, 2025

Price

ChatGPT and Gemini both have free versions that limit your access to features and models. Premium plans for both also start at around $20 per month. Chatbot features, such as deep research, image and video generation, web search, and more, are similar across ChatGPT and Gemini. However, paid Gemini plans also include Google Drive cloud storage (starting at 2TB) and a robust set of integrations across Google Workspace apps.

The highest-end tiers of ChatGPT and Gemini unlock increased usage limits and some unique features, but the prohibitive monthly cost of these plans (such as $200 for ChatGPT Pro or $250 for Gemini AI Ultra) puts them out of reach for most people. The features specific to ChatGPT’s Pro plan, such as the o1 pro mode that leverages additional computing power for particularly complicated questions, aren’t especially relevant to the average consumer, so you won’t feel like you’re missing out. However, you will probably want the features that are exclusive to Gemini’s AI Ultra plan, such as Veo 3 video generation.

Winner: Gemini

Platforms

You can access ChatGPT and Gemini on the web or via mobile apps (Android and iOS). ChatGPT also has desktop apps (macOS and Windows) and an official extension for Google Chrome. Gemini doesn’t have dedicated desktop apps or a Chrome extension, though it integrates directly with the browser.

(Credit: OpenAI/PCMag)

ChatGPT is available elsewhere, too, such as via Siri. As mentioned, you can access Gemini in Google apps, such as Calendar, Docs, Drive, Gmail, Maps, Keep, Photos, Sheets, and YouTube Music. Both ChatGPT and Gemini models also appear on sites like Perplexity. However, you get the most features from these chatbots in their dedicated apps and web portals.

The interfaces of both chatbots are largely consistent across platforms. They are easy to use and don’t overwhelm you with options and toggles. ChatGPT has a few more settings to play with, such as the ability to adjust its personality, while Gemini’s deep research interface makes better use of screen real estate.

Winner: Tie

AI Models

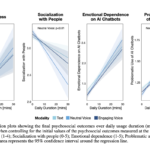

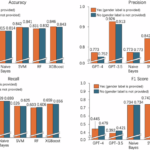

ChatGPT has two primary series of models, the 4-series (its flagship conversational line) and the o-series (its complex reasoning line). Gemini similarly offers a general-purpose Flash series and a Pro series for more complicated tasks.

ChatGPT’s latest models are o3 and o4-mini, and Gemini’s latest are 2.5 Flash and 2.5 Pro. Outside of coding or solving an equation, you will spend most of your time using the 4-series and Flash models. Below, you can see how these models perform on a variety of tasks. Which model is better really depends on what you want to do.

Winner: Tie

Web Search

ChatGPT and Gemini can both search for up-to-date information on the web with ease. However, ChatGPT presents article tiles at the bottom of its responses for further reading, has excellent sourcing that makes it easy to link claims to evidence, includes images in responses when relevant, and often provides more detail in its answers. Gemini doesn’t show source names and full article titles, and it includes article tiles and images only when you use Google’s AI Mode. Sourcing in this mode is even less robust; Google relegates sources to clickable carets that don’t highlight the relevant parts of its response.

As part of their web search experiences, ChatGPT and Gemini can help you shop. If you ask for shopping advice, both present clickable tiles that link to retailers. However, Gemini generally suggests better products and has a unique feature that lets you upload an image of yourself to digitally try on clothes before you buy.

Winner: ChatGPT

Deep Research

ChatGPT and Gemini can generate reports that run dozens of pages and include more than 50 sources on any topic. The biggest difference between the two comes down to sourcing. Gemini often cites more sources than ChatGPT, but it handles sourcing in deep research reports the same way it does in AI Mode search, meaning clickable carets without in-text highlights. Because it’s harder to connect the claims in Gemini’s reports to actual sources, it’s harder to believe them. ChatGPT’s clear sourcing with in-text highlights is easier to trust. However, Gemini has some quality-of-life features that ChatGPT lacks, such as the ability to export properly formatted reports to Google Docs with a single click. Their tone also differs: ChatGPT’s reports read like elaborate forum posts, while Gemini’s reports read like academic papers.

Winner: ChatGPT

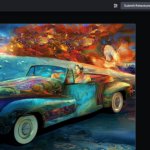

Image Generation

ChatGPT’s image generation impresses no matter what you ask for, even complex prompts for comic panels or diagrams. It isn’t perfect, but errors and distortion are minimal. Gemini generates visually appealing images faster than ChatGPT, but they routinely include noticeable errors and distortion. With complicated prompts, especially diagrams, Gemini produced nonsensical results in testing.

Above, you can see how ChatGPT (first slide) and Gemini (second slide) fared with the following prompt: “Generate an image of a fashion studio with simple, rustic decor that contrasts with the nicer space. Include a brown sofa and brick walls.” ChatGPT’s image limits its problems to fine detail in the leaves of its plants and the text in its book, while Gemini’s image shows more noticeable problems in its cord tube and lamp.

Winner: ChatGPT

Video Generation

Gemini’s video generation is best-in-class, especially since ChatGPT can’t match its ability to produce accompanying audio. Currently, Google locks Gemini’s latest video generation model, Veo 3, behind the expensive AI Ultra plan, but you get more realistic videos than with ChatGPT. Gemini also has other features that ChatGPT lacks, such as the Flow filmmaker tool, which lets you extend generated clips, and the Whisk AI animator, which lets you animate still images. However, keep in mind that even with Veo 3, you still need to generate videos several times to get a great result.

In the example above, I prompted ChatGPT and Gemini to show me a Rubik’s Cube solver solving a cube. The person in Gemini’s video looks great, and the accompanying audio is competent. At the end, there’s nice attention to detail with the frame shifting, simulating the stopping of a selfie recording. Meanwhile, ChatGPT struggled with its cube, distorting it heavily.

Winner: Gemini

File Processing

Understanding files is a strength of both ChatGPT and Gemini. Whether you want them to answer questions about a manual, edit a resume, or tell you something about an image, neither disappoints. However, ChatGPT has the edge over Gemini, as it offers slightly better image recognition and more detailed answers when you ask about uploaded files. Both chatbots still sometimes invent quotes from provided documents or misinterpret images, so be sure to verify their results.

Winner: ChatGPT

Creative Writing

ChatGPT and Gemini can both generate competent poems, plays, stories, and more. ChatGPT, however, stands out between the two because of how unique its responses are and how well it follows prompts. Gemini's responses can feel repetitive if you don't carefully calibrate your requests, and it doesn't always follow every instruction to the letter.

In the example above, I prompted ChatGPT (first slide) and Gemini (second slide) with the following: "Without referencing anything in your memory or prior responses, I want you to write me a free verse poem. Pay special attention to capitalization, enjambment, line breaks, and punctuation. Since it's free verse, I don't want a familiar meter or rhyme scheme, but I want it to have a cohesive style." ChatGPT managed to deliver what I asked for in the prompt, and its poem was distinct from previous generations. Gemini had trouble generating a poem that incorporated anything beyond commas and periods, and its poem above reads very similarly to one it generated before.

Winner: ChatGPT

Complex Reasoning

The complex reasoning models from ChatGPT and Gemini can handle computer science, math, and physics questions with ease, and they competently show their work. In testing, ChatGPT gave correct answers slightly more often than Gemini, but their performance is fairly similar. Both chatbots can and will give you incorrect answers, so checking their work is still vital if you're doing something important or trying to learn a concept.

Winner: ChatGPT

Integrations

ChatGPT doesn't have meaningful integrations, whereas Gemini's integrations are a defining feature. Whether you want help editing an essay in Google Docs, to share a Chrome tab to ask a question, to try a new YouTube Music playlist tailored to your taste, or to unlock personal insights in Gmail, Gemini can do it all and much more. It's hard to overstate how comprehensive and powerful Gemini's integrations really are.

Winner: Gemini

AI Assistants

ChatGPT has custom GPTs, and Gemini has Gems. Both are customizable AI assistants. Neither is a major upgrade over talking to the chatbots directly, but third-party custom GPTs add new functionality, such as easy access to Canva for editing generated images. Meanwhile, third parties can't create Gems, and you can't share them. You can allow custom GPTs to access external information or take external actions, but Gems have no similar functionality.

Winner: ChatGPT

Context Windows and Usage Limits

ChatGPT's context window goes up to 128,000 tokens on its top-tier plans, and all plans have dynamic usage limits based on server load. Gemini, on the other hand, has a context window of 1,000,000 tokens. Google isn't very clear about Gemini's exact usage limits, but they are also dynamic, depending on server load. Anecdotally, I couldn't hit the usage limits on the paid plans for either ChatGPT or Gemini, but it's much easier to do so on the free plans.

Winner: Gemini

Privacy

Privacy on ChatGPT and Gemini is a mixed bag. Both collect significant amounts of data, including all of your chats, and use that data to train their AI models by default. However, both give you the option to turn off training. Google at least doesn't collect and use Gemini data for training purposes in Workspace apps, such as Gmail, by default. ChatGPT and Gemini also promise not to sell your data or use it for ad targeting, but Google and OpenAI have sordid histories when it comes to hacks, leaks, and various digital misdeeds, so I recommend not sharing anything too sensitive.

Winner: Tie

Related posts

Trending

-

Startups2 años ago

Startups2 años agoRemove.bg: La Revolución en la Edición de Imágenes que Debes Conocer

-

Tutoriales2 años ago

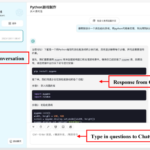

Tutoriales2 años agoCómo Comenzar a Utilizar ChatGPT: Una Guía Completa para Principiantes

-

Startups2 años ago

Startups2 años agoDeepgram: Revolucionando el Reconocimiento de Voz con IA

-

Startups2 años ago

Startups2 años agoStartups de IA en EE.UU. que han recaudado más de $100M en 2024

-

Recursos2 años ago

Recursos2 años agoCómo Empezar con Popai.pro: Tu Espacio Personal de IA – Guía Completa, Instalación, Versiones y Precios

-

Recursos2 años ago

Recursos2 años agoPerplexity aplicado al Marketing Digital y Estrategias SEO

-

Estudiar IA2 años ago

Estudiar IA2 años agoCurso de Inteligencia Artificial Aplicada de 4Geeks Academy 2024

-

Noticias2 años ago

Noticias2 años agoDos periodistas octogenarios deman a ChatGPT por robar su trabajo