Noticias

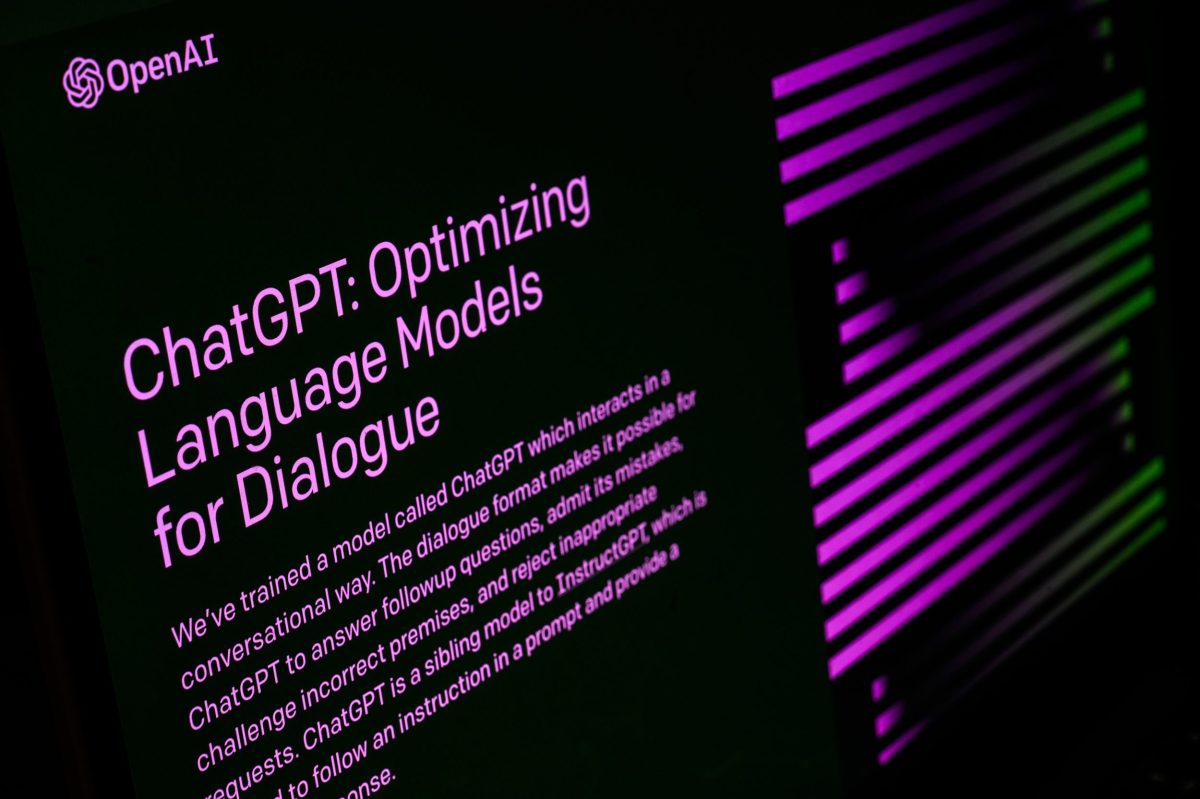

Everything you need to know about the AI chatbot

Published

11 meses agoon

ChatGPT, OpenAI’s text-generating AI chatbot, has taken the world by storm since its launch in November 2022. What started as a tool to supercharge productivity through writing essays and code with short text prompts has evolved into a behemoth with 300 million weekly active users.

2024 was a big year for OpenAI, from its partnership with Apple for its generative AI offering, Apple Intelligence, the release of GPT-4o with voice capabilities, and the highly-anticipated launch of its text-to-video model Sora.

OpenAI also faced its share of internal drama, including the notable exits of high-level execs like co-founder and longtime chief scientist Ilya Sutskever and CTO Mira Murati. OpenAI has also been hit with lawsuits from Alden Global Capital-owned newspapers alleging copyright infringement, as well as an injunction from Elon Musk to halt OpenAI’s transition to a for-profit.

In 2025, OpenAI is battling the perception that it’s ceding ground in the AI race to Chinese rivals like DeepSeek. The company has been trying to shore up its relationship with Washington as it simultaneously pursues an ambitious data center project, and as it reportedly lays the groundwork for one of the largest funding rounds in history.

Below, you’ll find a timeline of ChatGPT product updates and releases, starting with the latest, which we’ve been updating throughout the year. If you have any other questions, check out our ChatGPT FAQ here.

To see a list of 2024 updates, go here.

Timeline of the most recent ChatGPT updates

April 2025

OpenAI wants its AI model to access cloud models for assistance

OpenAI leaders have been talking about allowing the open model to link up with OpenAI’s cloud-hosted models to improve its ability to respond to intricate questions, two sources familiar with the situation told TechCrunch.

OpenAI aims to make its new “open” AI model the best on the market

OpenAI is preparing to launch an AI system that will be openly accessible, allowing users to download it for free without any API restrictions. Aidan Clark, OpenAI’s VP of research, is spearheading the development of the open model, which is in the very early stages, sources familiar with the situation told TechCrunch.

OpenAI’s GPT-4.1 may be less aligned than earlier models

OpenAI released a new AI model called GPT-4.1 in mid-April. However, multiple independent tests indicate that the model is less reliable than previous OpenAI releases. The company skipped that step — sending safety cards for GPT-4.1 — claiming in a statement to TechCrunch that “GPT-4.1 is not a frontier model, so there won’t be a separate system card released for it.”

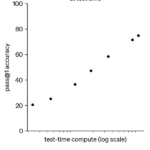

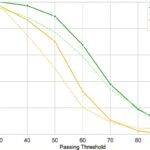

OpenAI’s o3 AI model scored lower than expected on a benchmark

Questions have been raised regarding OpenAI’s transparency and procedures for testing models after a difference in benchmark outcomes was detected by first- and third-party benchmark results for the o3 AI model. OpenAI introduced o3 in December, stating that the model could solve approximately 25% of questions on FrontierMath, a difficult math problem set. Epoch AI, the research institute behind FrontierMath, discovered that o3 achieved a score of approximately 10%, which was significantly lower than OpenAI’s top-reported score.

OpenAI unveils Flex processing for cheaper, slower AI tasks

OpenAI has launched a new API feature called Flex processing that allows users to use AI models at a lower cost but with slower response times and occasional resource unavailability. Flex processing is available in beta on the o3 and o4-mini reasoning models for non-production tasks like model evaluations, data enrichment, and asynchronous workloads.

OpenAI’s latest AI models now have a safeguard against biorisks

OpenAI has rolled out a new system to monitor its AI reasoning models, o3 and o4 mini, for biological and chemical threats. The system is designed to prevent models from giving advice that could potentially lead to harmful attacks, as stated in OpenAI’s safety report.

OpenAI launches its latest reasoning models, o3 and o4-mini

OpenAI has released two new reasoning models, o3 and o4 mini, just two days after launching GPT-4.1. The company claims o3 is the most advanced reasoning model it has developed, while o4-mini is said to provide a balance of price, speed, and performance. The new models stand out from previous reasoning models because they can use ChatGPT features like web browsing, coding, and image processing and generation. But they hallucinate more than several of OpenAI’s previous models.

OpenAI has added a new section to ChatGPT to offer easier access to AI-generated images for all user tiers

Open AI introduced a new section called “library” to make it easier for users to create images on mobile and web platforms, per the company’s X post.

OpenAI could “adjust” its safeguards if rivals release “high-risk” AI

OpenAI said on Tuesday that it might revise its safety standards if “another frontier AI developer releases a high-risk system without comparable safeguards.” The move shows how commercial AI developers face more pressure to rapidly implement models due to the increased competition.

OpenAI is building its own social media network

OpenAI is currently in the early stages of developing its own social media platform to compete with Elon Musk’s X and Mark Zuckerberg’s Instagram and Threads, according to The Verge. It is unclear whether OpenAI intends to launch the social network as a standalone application or incorporate it into ChatGPT.

OpenAI will remove its largest AI model, GPT-4.5, from the API, in July

OpenAI will discontinue its largest AI model, GPT-4.5, from its API even though it was just launched in late February. GPT-4.5 will be available in a research preview for paying customers. Developers can use GPT-4.5 through OpenAI’s API until July 14; then, they will need to switch to GPT-4.1, which was released on April 14.

OpenAI unveils GPT-4.1 AI models that focus on coding capabilities

OpenAI has launched three members of the GPT-4.1 model — GPT-4.1, GPT-4.1 mini, and GPT-4.1 nano — with a specific focus on coding capabilities. It’s accessible via the OpenAI API but not ChatGPT. In the competition to develop advanced programming models, GPT-4.1 will rival AI models such as Google’s Gemini 2.5 Pro, Anthropic’s Claude 3.7 Sonnet, and DeepSeek’s upgraded V3.

OpenAI will discontinue ChatGPT’s GPT-4 at the end of April

OpenAI plans to sunset GPT-4, an AI model introduced more than two years ago, and replace it with GPT-4o, the current default model, per changelog. It will take effect on April 30. GPT-4 will remain available via OpenAI’s API.

OpenAI could release GPT-4.1 soon

OpenAI may launch several new AI models, including GPT-4.1, soon, The Verge reported, citing anonymous sources. GPT-4.1 would be an update of OpenAI’s GPT-4o, which was released last year. On the list of upcoming models are GPT-4.1 and smaller versions like GPT-4.1 mini and nano, per the report.

OpenAI has updated ChatGPT to use information from your previous conversations

OpenAI started updating ChatGPT to enable the chatbot to remember previous conversations with a user and customize its responses based on that context. This feature is rolling out to ChatGPT Pro and Plus users first, excluding those in the U.K., EU, Iceland, Liechtenstein, Norway, and Switzerland.

OpenAI is working on watermarks for images made with ChatGPT

It looks like OpenAI is working on a watermarking feature for images generated using GPT-4o. AI researcher Tibor Blaho spotted a new “ImageGen” watermark feature in the new beta of ChatGPT’s Android app. Blaho also found mentions of other tools: “Structured Thoughts,” “Reasoning Recap,” “CoT Search Tool,” and “l1239dk1.”

OpenAI offers ChatGPT Plus for free to U.S., Canadian college students

OpenAI is offering its $20-per-month ChatGPT Plus subscription tier for free to all college students in the U.S. and Canada through the end of May. The offer will let millions of students use OpenAI’s premium service, which offers access to the company’s GPT-4o model, image generation, voice interaction, and research tools that are not available in the free version.

ChatGPT users have generated over 700M images so far

More than 130 million users have created over 700 million images since ChatGPT got the upgraded image generator on March 25, according to COO of OpenAI Brad Lightcap. The image generator was made available to all ChatGPT users on March 31, and went viral for being able to create Ghibli-style photos.

OpenAI’s o3 model could cost more to run than initial estimate

The Arc Prize Foundation, which develops the AI benchmark tool ARC-AGI, has updated the estimated computing costs for OpenAI’s o3 “reasoning” model managed by ARC-AGI. The organization originally estimated that the best-performing configuration of o3 it tested, o3 high, would cost approximately $3,000 to address a single problem. The Foundation now thinks the cost could be much higher, possibly around $30,000 per task.

OpenAI CEO says capacity issues will cause product delays

In a series of posts on X, OpenAI CEO Sam Altman said the company’s new image-generation tool’s popularity may cause product releases to be delayed. “We are getting things under control, but you should expect new releases from OpenAI to be delayed, stuff to break, and for service to sometimes be slow as we deal with capacity challenges,” he wrote.

March 2025

OpenAI plans to release a new ‘open’ AI language model

OpeanAI intends to release its “first” open language model since GPT-2 “in the coming months.” The company plans to host developer events to gather feedback and eventually showcase prototypes of the model. The first developer event is to be held in San Francisco, with sessions to follow in Europe and Asia.

OpenAI removes ChatGPT’s restrictions on image generation

OpenAI made a notable change to its content moderation policies after the success of its new image generator in ChatGPT, which went viral for being able to create Studio Ghibli-style images. The company has updated its policies to allow ChatGPT to generate images of public figures, hateful symbols, and racial features when requested. OpenAI had previously declined such prompts due to the potential controversy or harm they may cause. However, the company has now “evolved” its approach, as stated in a blog post published by Joanne Jang, the lead for OpenAI’s model behavior.

OpenAI adopts Anthropic’s standard for linking AI models with data

OpenAI wants to incorporate Anthropic’s Model Context Protocol (MCP) into all of its products, including the ChatGPT desktop app. MCP, an open-source standard, helps AI models generate more accurate and suitable responses to specific queries, and lets developers create bidirectional links between data sources and AI applications like chatbots. The protocol is currently available in the Agents SDK, and support for the ChatGPT desktop app and Responses API will be coming soon, OpenAI CEO Sam Altman said.

OpenAI’s viral Studio Ghibli-style images could raise AI copyright concerns

The latest update of the image generator on OpenAI’s ChatGPT has triggered a flood of AI-generated memes in the style of Studio Ghibli, the Japanese animation studio behind blockbuster films like “My Neighbor Totoro” and “Spirited Away.” The burgeoning mass of Ghibli-esque images have sparked concerns about whether OpenAI has violated copyright laws, especially since the company is already facing legal action for using source material without authorization.

OpenAI expects revenue to triple to $12.7 billion this year

OpenAI expects its revenue to triple to $12.7 billion in 2025, fueled by the performance of its paid AI software, Bloomberg reported, citing an anonymous source. While the startup doesn’t expect to reach positive cash flow until 2029, it expects revenue to increase significantly in 2026 to surpass $29.4 billion, the report said.

ChatGPT has upgraded its image-generation feature

OpenAI on Tuesday rolled out a major upgrade to ChatGPT’s image-generation capabilities: ChatGPT can now use the GPT-4o model to generate and edit images and photos directly. The feature went live earlier this week in ChatGPT and Sora, OpenAI’s AI video-generation tool, for subscribers of the company’s Pro plan, priced at $200 a month, and will be available soon to ChatGPT Plus subscribers and developers using the company’s API service. The company’s CEO Sam Altman said on Wednesday, however, that the release of the image generation feature to free users would be delayed due to higher demand than the company expected.

OpenAI announces leadership updates

Brad Lightcap, OpenAI’s chief operating officer, will lead the company’s global expansion and manage corporate partnerships as CEO Sam Altman shifts his focus to research and products, according to a blog post from OpenAI. Lightcap, who previously worked with Altman at Y Combinator, joined the Microsoft-backed startup in 2018. OpenAI also said Mark Chen would step into the expanded role of chief research officer, and Julia Villagra will take on the role of chief people officer.

OpenAI’s AI voice assistant now has advanced feature

OpenAI has updated its AI voice assistant with improved chatting capabilities, according to a video posted on Monday (March 24) to the company’s official media channels. The update enables real-time conversations, and the AI assistant is said to be more personable and interrupts users less often. Users on ChatGPT’s free tier can now access the new version of Advanced Voice Mode, while paying users will receive answers that are “more direct, engaging, concise, specific, and creative,” a spokesperson from OpenAI told TechCrunch.

OpenAI, Meta in talks with Reliance in India

OpenAI and Meta have separately engaged in discussions with Indian conglomerate Reliance Industries regarding potential collaborations to enhance their AI services in the country, per a report by The Information. One key topic being discussed is Reliance Jio distributing OpenAI’s ChatGPT. Reliance has proposed selling OpenAI’s models to businesses in India through an application programming interface (API) so they can incorporate AI into their operations. Meta also plans to bolster its presence in India by constructing a large 3GW data center in Jamnagar, Gujarat. OpenAI, Meta, and Reliance have not yet officially announced these plans.

OpenAI faces privacy complaint in Europe for chatbot’s defamatory hallucinations

Noyb, a privacy rights advocacy group, is supporting an individual in Norway who was shocked to discover that ChatGPT was providing false information about him, stating that he had been found guilty of killing two of his children and trying to harm the third. “The GDPR is clear. Personal data has to be accurate,” said Joakim Söderberg, data protection lawyer at Noyb, in a statement. “If it’s not, users have the right to have it changed to reflect the truth. Showing ChatGPT users a tiny disclaimer that the chatbot can make mistakes clearly isn’t enough. You can’t just spread false information and in the end add a small disclaimer saying that everything you said may just not be true.”

OpenAI upgrades its transcription and voice-generating AI models

OpenAI has added new transcription and voice-generating AI models to its APIs: a text-to-speech model, “gpt-4o-mini-tts,” that delivers more nuanced and realistic sounding speech, as well as two speech-to-text models called “gpt-4o-transcribe” and “gpt-4o-mini-transcribe”. The company claims they are improved versions of what was already there and that they hallucinate less.

OpenAI has launched o1-pro, a more powerful version of its o1

OpenAI has introduced o1-pro in its developer API. OpenAI says its o1-pro uses more computing than its o1 “reasoning” AI model to deliver “consistently better responses.” It’s only accessible to select developers who have spent at least $5 on OpenAI API services. OpenAI charges $150 for every million tokens (about 750,000 words) input into the model and $600 for every million tokens the model produces. It costs twice as much as OpenAI’s GPT-4.5 for input and 10 times the price of regular o1.

OpenAI research lead Noam Brown thinks AI “reasoning” models could’ve arrived decades ago

Noam Brown, who heads AI reasoning research at OpenAI, thinks that certain types of AI models for “reasoning” could have been developed 20 years ago if researchers had understood the correct approach and algorithms.

OpenAI says it has trained an AI that’s “really good” at creative writing

OpenAI CEO Sam Altman said, in a post on X, that the company has trained a “new model” that’s “really good” at creative writing. He posted a lengthy sample from the model given the prompt “Please write a metafictional literary short story about AI and grief.” OpenAI has not extensively explored the use of AI for writing fiction. The company has mostly concentrated on challenges in rigid, predictable areas such as math and programming. And it turns out that it might not be that great at creative writing at all.

we trained a new model that is good at creative writing (not sure yet how/when it will get released). this is the first time i have been really struck by something written by AI; it got the vibe of metafiction so right.

PROMPT:

Please write a metafictional literary short story…

— Sam Altman (@sama) March 11, 2025

OpenAI launches new tools to help businesses build AI agents

OpenAI rolled out new tools designed to help developers and businesses build AI agents — automated systems that can independently accomplish tasks — using the company’s own AI models and frameworks. The tools are part of OpenAI’s new Responses API, which enables enterprises to develop customized AI agents that can perform web searches, scan through company files, and navigate websites, similar to OpenAI’s Operator product. The Responses API effectively replaces OpenAI’s Assistants API, which the company plans to discontinue in the first half of 2026.

OpenAI reportedly plans to charge up to $20,000 a month for specialized AI ‘agents’

OpenAI intends to release several “agent” products tailored for different applications, including sorting and ranking sales leads and software engineering, according to a report from The Information. One, a “high-income knowledge worker” agent, will reportedly be priced at $2,000 a month. Another, a software developer agent, is said to cost $10,000 a month. The most expensive rumored agents, which are said to be aimed at supporting “PhD-level research,” are expected to cost $20,000 per month. The jaw-dropping figure is indicative of how much cash OpenAI needs right now: The company lost roughly $5 billion last year after paying for costs related to running its services and other expenses. It’s unclear when these agentic tools might launch or which customers will be eligible to buy them.

ChatGPT can directly edit your code

The latest version of the macOS ChatGPT app allows users to edit code directly in supported developer tools, including Xcode, VS Code, and JetBrains. ChatGPT Plus, Pro, and Team subscribers can use the feature now, and the company plans to roll it out to more users like Enterprise, Edu, and free users.

ChatGPT’s weekly active users doubled in less than 6 months, thanks to new releases

According to a new report from VC firm Andreessen Horowitz (a16z), OpenAI’s AI chatbot, ChatGPT, experienced solid growth in the second half of 2024. It took ChatGPT nine months to increase its weekly active users from 100 million in November 2023 to 200 million in August 2024, but it only took less than six months to double that number once more, according to the report. ChatGPT’s weekly active users increased to 300 million by December 2024 and 400 million by February 2025. ChatGPT has experienced significant growth recently due to the launch of new models and features, such as GPT-4o, with multimodal capabilities. ChatGPT usage spiked from April to May 2024, shortly after that model’s launch.

February 2025

OpenAI cancels its o3 AI model in favor of a ‘unified’ next-gen release

OpenAI has effectively canceled the release of o3 in favor of what CEO Sam Altman is calling a “simplified” product offering. In a post on X, Altman said that, in the coming months, OpenAI will release a model called GPT-5 that “integrates a lot of [OpenAI’s] technology,” including o3, in ChatGPT and its API. As a result of that roadmap decision, OpenAI no longer plans to release o3 as a standalone model.

ChatGPT may not be as power-hungry as once assumed

A commonly cited stat is that ChatGPT requires around 3 watt-hours of power to answer a single question. Using OpenAI’s latest default model for ChatGPT, GPT-4o, as a reference, nonprofit AI research institute Epoch AI found the average ChatGPT query consumes around 0.3 watt-hours. However, the analysis doesn’t consider the additional energy costs incurred by ChatGPT with features like image generation or input processing.

OpenAI now reveals more of its o3-mini model’s thought process

In response to pressure from rivals like DeepSeek, OpenAI is changing the way its o3-mini model communicates its step-by-step “thought” process. ChatGPT users will see an updated “chain of thought” that shows more of the model’s “reasoning” steps and how it arrived at answers to questions.

You can now use ChatGPT web search without logging in

OpenAI is now allowing anyone to use ChatGPT web search without having to log in. While OpenAI had previously allowed users to ask ChatGPT questions without signing in, responses were restricted to the chatbot’s last training update. This only applies through ChatGPT.com, however. To use ChatGPT in any form through the native mobile app, you will still need to be logged in.

OpenAI unveils a new ChatGPT agent for ‘deep research’

OpenAI announced a new AI “agent” called deep research that’s designed to help people conduct in-depth, complex research using ChatGPT. OpenAI says the “agent” is intended for instances where you don’t just want a quick answer or summary, but instead need to assiduously consider information from multiple websites and other sources.

January 2025

OpenAI used a subreddit to test AI persuasion

OpenAI used the subreddit r/ChangeMyView to measure the persuasive abilities of its AI reasoning models. OpenAI says it collects user posts from the subreddit and asks its AI models to write replies, in a closed environment, that would change the Reddit user’s mind on a subject. The company then shows the responses to testers, who assess how persuasive the argument is, and finally OpenAI compares the AI models’ responses to human replies for that same post.

OpenAI launches o3-mini, its latest ‘reasoning’ model

OpenAI launched a new AI “reasoning” model, o3-mini, the newest in the company’s o family of models. OpenAI first previewed the model in December alongside a more capable system called o3. OpenAI is pitching its new model as both “powerful” and “affordable.”

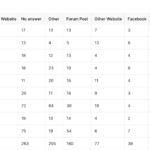

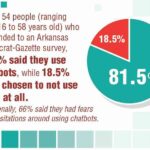

ChatGPT’s mobile users are 85% male, report says

A new report from app analytics firm Appfigures found that over half of ChatGPT’s mobile users are under age 25, with users between ages 50 and 64 making up the second largest age demographic. The gender gap among ChatGPT users is even more significant. Appfigures estimates that across age groups, men make up 84.5% of all users.

OpenAI launches ChatGPT plan for US government agencies

OpenAI launched ChatGPT Gov designed to provide U.S. government agencies an additional way to access the tech. ChatGPT Gov includes many of the capabilities found in OpenAI’s corporate-focused tier, ChatGPT Enterprise. OpenAI says that ChatGPT Gov enables agencies to more easily manage their own security, privacy, and compliance, and could expedite internal authorization of OpenAI’s tools for the handling of non-public sensitive data.

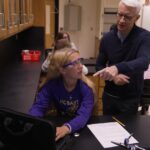

More teens report using ChatGPT for schoolwork, despite the tech’s faults

Younger Gen Zers are embracing ChatGPT, for schoolwork, according to a new survey by the Pew Research Center. In a follow-up to its 2023 poll on ChatGPT usage among young people, Pew asked ~1,400 U.S.-based teens ages 13 to 17 whether they’ve used ChatGPT for homework or other school-related assignments. Twenty-six percent said that they had, double the number two years ago. Just over half of teens responding to the poll said they think it’s acceptable to use ChatGPT for researching new subjects. But considering the ways ChatGPT can fall short, the results are possibly cause for alarm.

OpenAI says it may store deleted Operator data for up to 90 days

OpenAI says that it might store chats and associated screenshots from customers who use Operator, the company’s AI “agent” tool, for up to 90 days — even after a user manually deletes them. While OpenAI has a similar deleted data retention policy for ChatGPT, the retention period for ChatGPT is only 30 days, which is 60 days shorter than Operator’s.

OpenAI launches Operator, an AI agent that performs tasks autonomously

OpenAI is launching a research preview of Operator, a general-purpose AI agent that can take control of a web browser and independently perform certain actions. Operator promises to automate tasks such as booking travel accommodations, making restaurant reservations, and shopping online.

OpenAI may preview its agent tool for users on the $200-per-month Pro plan

Operator, OpenAI’s agent tool, could be released sooner rather than later. Changes to ChatGPT’s code base suggest that Operator will be available as an early research preview to users on the $200 Pro subscription plan. The changes aren’t yet publicly visible, but a user on X who goes by Choi spotted these updates in ChatGPT’s client-side code. TechCrunch separately identified the same references to Operator on OpenAI’s website.

OpenAI tests phone number-only ChatGPT signups

OpenAI has begun testing a feature that lets new ChatGPT users sign up with only a phone number — no email required. The feature is currently in beta in the U.S. and India. However, users who create an account using their number can’t upgrade to one of OpenAI’s paid plans without verifying their account via an email. Multi-factor authentication also isn’t supported without a valid email.

ChatGPT now lets you schedule reminders and recurring tasks

ChatGPT’s new beta feature, called tasks, allows users to set simple reminders. For example, you can ask ChatGPT to remind you when your passport expires in six months, and the AI assistant will follow up with a push notification on whatever platform you have tasks enabled. The feature will start rolling out to ChatGPT Plus, Team, and Pro users around the globe this week.

New ChatGPT feature lets users assign it traits like ‘chatty’ and ‘Gen Z’

OpenAI is introducing a new way for users to customize their interactions with ChatGPT. Some users found they can specify a preferred name or nickname and “traits” they’d like the chatbot to have. OpenAI suggests traits like “Chatty,” “Encouraging,” and “Gen Z.” However, some users reported that the new options have disappeared, so it’s possible they went live prematurely.

FAQs:

What is ChatGPT? How does it work?

ChatGPT is a general-purpose chatbot that uses artificial intelligence to generate text after a user enters a prompt, developed by tech startup OpenAI. The chatbot uses GPT-4, a large language model that uses deep learning to produce human-like text.

When did ChatGPT get released?

November 30, 2022 is when ChatGPT was released for public use.

What is the latest version of ChatGPT?

Both the free version of ChatGPT and the paid ChatGPT Plus are regularly updated with new GPT models. The most recent model is GPT-4o.

Can I use ChatGPT for free?

There is a free version of ChatGPT that only requires a sign-in in addition to the paid version, ChatGPT Plus.

Who uses ChatGPT?

Anyone can use ChatGPT! More and more tech companies and search engines are utilizing the chatbot to automate text or quickly answer user questions/concerns.

What companies use ChatGPT?

Multiple enterprises utilize ChatGPT, although others may limit the use of the AI-powered tool.

Most recently, Microsoft announced at its 2023 Build conference that it is integrating its ChatGPT-based Bing experience into Windows 11. A Brooklyn-based 3D display startup Looking Glass utilizes ChatGPT to produce holograms you can communicate with by using ChatGPT. And nonprofit organization Solana officially integrated the chatbot into its network with a ChatGPT plug-in geared toward end users to help onboard into the web3 space.

What does GPT mean in ChatGPT?

GPT stands for Generative Pre-Trained Transformer.

What is the difference between ChatGPT and a chatbot?

A chatbot can be any software/system that holds dialogue with you/a person but doesn’t necessarily have to be AI-powered. For example, there are chatbots that are rules-based in the sense that they’ll give canned responses to questions.

ChatGPT is AI-powered and utilizes LLM technology to generate text after a prompt.

Can ChatGPT write essays?

Yes.

Can ChatGPT commit libel?

Due to the nature of how these models work, they don’t know or care whether something is true, only that it looks true. That’s a problem when you’re using it to do your homework, sure, but when it accuses you of a crime you didn’t commit, that may well at this point be libel.

We will see how handling troubling statements produced by ChatGPT will play out over the next few months as tech and legal experts attempt to tackle the fastest moving target in the industry.

Does ChatGPT have an app?

Yes, there is a free ChatGPT mobile app for iOS and Android users.

What is the ChatGPT character limit?

It’s not documented anywhere that ChatGPT has a character limit. However, users have noted that there are some character limitations after around 500 words.

Does ChatGPT have an API?

Yes, it was released March 1, 2023.

What are some sample everyday uses for ChatGPT?

Everyday examples include programming, scripts, email replies, listicles, blog ideas, summarization, etc.

What are some advanced uses for ChatGPT?

Advanced use examples include debugging code, programming languages, scientific concepts, complex problem solving, etc.

How good is ChatGPT at writing code?

It depends on the nature of the program. While ChatGPT can write workable Python code, it can’t necessarily program an entire app’s worth of code. That’s because ChatGPT lacks context awareness — in other words, the generated code isn’t always appropriate for the specific context in which it’s being used.

Can you save a ChatGPT chat?

Yes. OpenAI allows users to save chats in the ChatGPT interface, stored in the sidebar of the screen. There are no built-in sharing features yet.

Are there alternatives to ChatGPT?

Yes. There are multiple AI-powered chatbot competitors such as Together, Google’s Gemini and Anthropic’s Claude, and developers are creating open source alternatives.

How does ChatGPT handle data privacy?

OpenAI has said that individuals in “certain jurisdictions” (such as the EU) can object to the processing of their personal information by its AI models by filling out this form. This includes the ability to make requests for deletion of AI-generated references about you. Although OpenAI notes it may not grant every request since it must balance privacy requests against freedom of expression “in accordance with applicable laws”.

The web form for making a deletion of data about you request is entitled “OpenAI Personal Data Removal Request”.

In its privacy policy, the ChatGPT maker makes a passing acknowledgement of the objection requirements attached to relying on “legitimate interest” (LI), pointing users towards more information about requesting an opt out — when it writes: “See here for instructions on how you can opt out of our use of your information to train our models.”

What controversies have surrounded ChatGPT?

Recently, Discord announced that it had integrated OpenAI’s technology into its bot named Clyde where two users tricked Clyde into providing them with instructions for making the illegal drug methamphetamine (meth) and the incendiary mixture napalm.

An Australian mayor has publicly announced he may sue OpenAI for defamation due to ChatGPT’s false claims that he had served time in prison for bribery. This would be the first defamation lawsuit against the text-generating service.

CNET found itself in the midst of controversy after Futurism reported the publication was publishing articles under a mysterious byline completely generated by AI. The private equity company that owns CNET, Red Ventures, was accused of using ChatGPT for SEO farming, even if the information was incorrect.

Several major school systems and colleges, including New York City Public Schools, have banned ChatGPT from their networks and devices. They claim that the AI impedes the learning process by promoting plagiarism and misinformation, a claim that not every educator agrees with.

There have also been cases of ChatGPT accusing individuals of false crimes.

Where can I find examples of ChatGPT prompts?

Several marketplaces host and provide ChatGPT prompts, either for free or for a nominal fee. One is PromptBase. Another is ChatX. More launch every day.

Can ChatGPT be detected?

Poorly. Several tools claim to detect ChatGPT-generated text, but in our tests, they’re inconsistent at best.

Are ChatGPT chats public?

No. But OpenAI recently disclosed a bug, since fixed, that exposed the titles of some users’ conversations to other people on the service.

What lawsuits are there surrounding ChatGPT?

None specifically targeting ChatGPT. But OpenAI is involved in at least one lawsuit that has implications for AI systems trained on publicly available data, which would touch on ChatGPT.

Are there issues regarding plagiarism with ChatGPT?

Yes. Text-generating AI models like ChatGPT have a tendency to regurgitate content from their training data.

You may like

Noticias

Revivir el compromiso en el aula de español: un desafío musical con chatgpt – enfoque de la facultad

Published

10 meses agoon

6 junio, 2025

A mitad del semestre, no es raro notar un cambio en los niveles de energía de sus alumnos (Baghurst y Kelley, 2013; Kumari et al., 2021). El entusiasmo inicial por aprender un idioma extranjero puede disminuir a medida que otros cursos con tareas exigentes compitan por su atención. Algunos estudiantes priorizan las materias que perciben como más directamente vinculadas a su especialidad o carrera, mientras que otros simplemente sienten el peso del agotamiento de mediados de semestre. En la primavera, los largos meses de invierno pueden aumentar esta fatiga, lo que hace que sea aún más difícil mantener a los estudiantes comprometidos (Rohan y Sigmon, 2000).

Este es el momento en que un instructor de idiomas debe pivotar, cambiando la dinámica del aula para reavivar la curiosidad y la motivación. Aunque los instructores se esfuerzan por incorporar actividades que se adapten a los cinco estilos de aprendizaje preferidos (Felder y Henriques, 1995)-Visual (aprendizaje a través de imágenes y comprensión espacial), auditivo (aprendizaje a través de la escucha y discusión), lectura/escritura (aprendizaje a través de interacción basada en texto), Kinesthetic (aprendizaje a través de movimiento y actividades prácticas) y multimodal (una combinación de múltiples estilos)-its is beneficiales). Estructurado y, después de un tiempo, clases predecibles con actividades que rompen el molde. La introducción de algo inesperado y diferente de la dinámica del aula establecida puede revitalizar a los estudiantes, fomentar la creatividad y mejorar su entusiasmo por el aprendizaje.

La música, en particular, ha sido durante mucho tiempo un aliado de instructores que enseñan un segundo idioma (L2), un idioma aprendido después de la lengua nativa, especialmente desde que el campo hizo la transición hacia un enfoque más comunicativo. Arraigado en la interacción y la aplicación del mundo real, el enfoque comunicativo prioriza el compromiso significativo sobre la memorización de memoria, ayudando a los estudiantes a desarrollar fluidez de formas naturales e inmersivas. La investigación ha destacado constantemente los beneficios de la música en la adquisición de L2, desde mejorar la pronunciación y las habilidades de escucha hasta mejorar la retención de vocabulario y la comprensión cultural (DeGrave, 2019; Kumar et al. 2022; Nuessel y Marshall, 2008; Vidal y Nordgren, 2024).

Sobre la base de esta tradición, la actividad que compartiremos aquí no solo incorpora música sino que también integra inteligencia artificial, agregando una nueva capa de compromiso y pensamiento crítico. Al usar la IA como herramienta en el proceso de aprendizaje, los estudiantes no solo se familiarizan con sus capacidades, sino que también desarrollan la capacidad de evaluar críticamente el contenido que genera. Este enfoque los alienta a reflexionar sobre el lenguaje, el significado y la interpretación mientras participan en el análisis de texto, la escritura creativa, la oratoria y la gamificación, todo dentro de un marco interactivo y culturalmente rico.

Descripción de la actividad: Desafío musical con Chatgpt: “Canta y descubre”

Objetivo:

Los estudiantes mejorarán su comprensión auditiva y su producción escrita en español analizando y recreando letras de canciones con la ayuda de ChatGPT. Si bien las instrucciones se presentan aquí en inglés, la actividad debe realizarse en el idioma de destino, ya sea que se enseñe el español u otro idioma.

Instrucciones:

1. Escuche y decodifique

- Divida la clase en grupos de 2-3 estudiantes.

- Elija una canción en español (por ejemplo, La Llorona por chavela vargas, Oye CÓMO VA por Tito Puente, Vivir mi Vida por Marc Anthony).

- Proporcione a cada grupo una versión incompleta de la letra con palabras faltantes.

- Los estudiantes escuchan la canción y completan los espacios en blanco.

2. Interpretar y discutir

- Dentro de sus grupos, los estudiantes analizan el significado de la canción.

- Discuten lo que creen que transmiten las letras, incluidas las emociones, los temas y cualquier referencia cultural que reconocan.

- Cada grupo comparte su interpretación con la clase.

- ¿Qué crees que la canción está tratando de comunicarse?

- ¿Qué emociones o sentimientos evocan las letras para ti?

- ¿Puedes identificar alguna referencia cultural en la canción? ¿Cómo dan forma a su significado?

- ¿Cómo influye la música (melodía, ritmo, etc.) en su interpretación de la letra?

- Cada grupo comparte su interpretación con la clase.

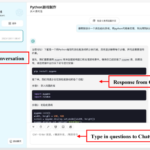

3. Comparar con chatgpt

- Después de formar su propio análisis, los estudiantes preguntan a Chatgpt:

- ¿Qué crees que la canción está tratando de comunicarse?

- ¿Qué emociones o sentimientos evocan las letras para ti?

- Comparan la interpretación de ChatGPT con sus propias ideas y discuten similitudes o diferencias.

4. Crea tu propio verso

- Cada grupo escribe un nuevo verso que coincide con el estilo y el ritmo de la canción.

- Pueden pedirle ayuda a ChatGPT: “Ayúdanos a escribir un nuevo verso para esta canción con el mismo estilo”.

5. Realizar y cantar

- Cada grupo presenta su nuevo verso a la clase.

- Si se sienten cómodos, pueden cantarlo usando la melodía original.

- Es beneficioso que el profesor tenga una versión de karaoke (instrumental) de la canción disponible para que las letras de los estudiantes se puedan escuchar claramente.

- Mostrar las nuevas letras en un monitor o proyector permite que otros estudiantes sigan y canten juntos, mejorando la experiencia colectiva.

6. Elección – El Grammy va a

Los estudiantes votan por diferentes categorías, incluyendo:

- Mejor adaptación

- Mejor reflexión

- Mejor rendimiento

- Mejor actitud

- Mejor colaboración

7. Reflexión final

- ¿Cuál fue la parte más desafiante de comprender la letra?

- ¿Cómo ayudó ChatGPT a interpretar la canción?

- ¿Qué nuevas palabras o expresiones aprendiste?

Pensamientos finales: música, IA y pensamiento crítico

Un desafío musical con Chatgpt: “Canta y descubre” (Desafío Musical Con Chatgpt: “Cantar y Descubrir”) es una actividad que he encontrado que es especialmente efectiva en mis cursos intermedios y avanzados. Lo uso cuando los estudiantes se sienten abrumados o distraídos, a menudo alrededor de los exámenes parciales, como una forma de ayudarlos a relajarse y reconectarse con el material. Sirve como un descanso refrescante, lo que permite a los estudiantes alejarse del estrés de las tareas y reenfocarse de una manera divertida e interactiva. Al incorporar música, creatividad y tecnología, mantenemos a los estudiantes presentes en la clase, incluso cuando todo lo demás parece exigir su atención.

Más allá de ofrecer una pausa bien merecida, esta actividad provoca discusiones atractivas sobre la interpretación del lenguaje, el contexto cultural y el papel de la IA en la educación. A medida que los estudiantes comparan sus propias interpretaciones de las letras de las canciones con las generadas por ChatGPT, comienzan a reconocer tanto el valor como las limitaciones de la IA. Estas ideas fomentan el pensamiento crítico, ayudándoles a desarrollar un enfoque más maduro de la tecnología y su impacto en su aprendizaje.

Agregar el elemento de karaoke mejora aún más la experiencia, dando a los estudiantes la oportunidad de realizar sus nuevos versos y divertirse mientras practica sus habilidades lingüísticas. Mostrar la letra en una pantalla hace que la actividad sea más inclusiva, lo que permite a todos seguirlo. Para hacerlo aún más agradable, seleccionando canciones que resuenen con los gustos de los estudiantes, ya sea un clásico como La Llorona O un éxito contemporáneo de artistas como Bad Bunny, Selena, Daddy Yankee o Karol G, hace que la actividad se sienta más personal y atractiva.

Esta actividad no se limita solo al aula. Es una gran adición a los clubes españoles o eventos especiales, donde los estudiantes pueden unirse a un amor compartido por la música mientras practican sus habilidades lingüísticas. Después de todo, ¿quién no disfruta de una buena parodia de su canción favorita?

Mezclar el aprendizaje de idiomas con música y tecnología, Desafío Musical Con Chatgpt Crea un entorno dinámico e interactivo que revitaliza a los estudiantes y profundiza su conexión con el lenguaje y el papel evolutivo de la IA. Convierte los momentos de agotamiento en oportunidades de creatividad, exploración cultural y entusiasmo renovado por el aprendizaje.

Angela Rodríguez Mooney, PhD, es profesora asistente de español y la Universidad de Mujeres de Texas.

Referencias

Baghurst, Timothy y Betty C. Kelley. “Un examen del estrés en los estudiantes universitarios en el transcurso de un semestre”. Práctica de promoción de la salud 15, no. 3 (2014): 438-447.

DeGrave, Pauline. “Música en el aula de idiomas extranjeros: cómo y por qué”. Revista de Enseñanza e Investigación de Lenguas 10, no. 3 (2019): 412-420.

Felder, Richard M. y Eunice R. Henriques. “Estilos de aprendizaje y enseñanza en la educación extranjera y de segundo idioma”. Anales de idiomas extranjeros 28, no. 1 (1995): 21-31.

Nuessel, Frank y April D. Marshall. “Prácticas y principios para involucrar a los tres modos comunicativos en español a través de canciones y música”. Hispania (2008): 139-146.

Kumar, Tribhuwan, Shamim Akhter, Mehrunnisa M. Yunus y Atefeh Shamsy. “Uso de la música y las canciones como herramientas pedagógicas en la enseñanza del inglés como contextos de idiomas extranjeros”. Education Research International 2022, no. 1 (2022): 1-9

Noticias

5 indicaciones de chatgpt que pueden ayudar a los adolescentes a lanzar una startup

Published

10 meses agoon

5 junio, 2025

Teen emprendedor que usa chatgpt para ayudarlo con su negocio

El emprendimiento adolescente sigue en aumento. Según Junior Achievement Research, el 66% de los adolescentes estadounidenses de entre 13 y 17 años dicen que es probable que considere comenzar un negocio como adultos, con el monitor de emprendimiento global 2023-2024 que encuentra que el 24% de los jóvenes de 18 a 24 años son actualmente empresarios. Estos jóvenes fundadores no son solo soñando, están construyendo empresas reales que generan ingresos y crean un impacto social, y están utilizando las indicaciones de ChatGPT para ayudarlos.

En Wit (lo que sea necesario), la organización que fundó en 2009, hemos trabajado con más de 10,000 jóvenes empresarios. Durante el año pasado, he observado un cambio en cómo los adolescentes abordan la planificación comercial. Con nuestra orientación, están utilizando herramientas de IA como ChatGPT, no como atajos, sino como socios de pensamiento estratégico para aclarar ideas, probar conceptos y acelerar la ejecución.

Los emprendedores adolescentes más exitosos han descubierto indicaciones específicas que los ayudan a pasar de una idea a otra. Estas no son sesiones genéricas de lluvia de ideas: están utilizando preguntas específicas que abordan los desafíos únicos que enfrentan los jóvenes fundadores: recursos limitados, compromisos escolares y la necesidad de demostrar sus conceptos rápidamente.

Aquí hay cinco indicaciones de ChatGPT que ayudan constantemente a los emprendedores adolescentes a construir negocios que importan.

1. El problema del primer descubrimiento chatgpt aviso

“Me doy cuenta de que [specific group of people]

luchar contra [specific problem I’ve observed]. Ayúdame a entender mejor este problema explicando: 1) por qué existe este problema, 2) qué soluciones existen actualmente y por qué son insuficientes, 3) cuánto las personas podrían pagar para resolver esto, y 4) tres formas específicas en que podría probar si este es un problema real que vale la pena resolver “.

Un adolescente podría usar este aviso después de notar que los estudiantes en la escuela luchan por pagar el almuerzo. En lugar de asumir que entienden el alcance completo, podrían pedirle a ChatGPT que investigue la deuda del almuerzo escolar como un problema sistémico. Esta investigación puede llevarlos a crear un negocio basado en productos donde los ingresos ayuden a pagar la deuda del almuerzo, lo que combina ganancias con el propósito.

Los adolescentes notan problemas de manera diferente a los adultos porque experimentan frustraciones únicas, desde los desafíos de las organizaciones escolares hasta las redes sociales hasta las preocupaciones ambientales. Según la investigación de Square sobre empresarios de la Generación de la Generación Z, el 84% planea ser dueños de negocios dentro de cinco años, lo que los convierte en candidatos ideales para las empresas de resolución de problemas.

2. El aviso de chatgpt de chatgpt de chatgpt de realidad de la realidad del recurso

“Soy [age] años con aproximadamente [dollar amount] invertir y [number] Horas por semana disponibles entre la escuela y otros compromisos. Según estas limitaciones, ¿cuáles son tres modelos de negocio que podría lanzar de manera realista este verano? Para cada opción, incluya costos de inicio, requisitos de tiempo y los primeros tres pasos para comenzar “.

Este aviso se dirige al elefante en la sala: la mayoría de los empresarios adolescentes tienen dinero y tiempo limitados. Cuando un empresario de 16 años emplea este enfoque para evaluar un concepto de negocio de tarjetas de felicitación, puede descubrir que pueden comenzar con $ 200 y escalar gradualmente. Al ser realistas sobre las limitaciones por adelantado, evitan el exceso de compromiso y pueden construir hacia objetivos de ingresos sostenibles.

Según el informe de Gen Z de Square, el 45% de los jóvenes empresarios usan sus ahorros para iniciar negocios, con el 80% de lanzamiento en línea o con un componente móvil. Estos datos respaldan la efectividad de la planificación basada en restricciones: cuando funcionan los adolescentes dentro de las limitaciones realistas, crean modelos comerciales más sostenibles.

3. El aviso de chatgpt del simulador de voz del cliente

“Actúa como un [specific demographic] Y dame comentarios honestos sobre esta idea de negocio: [describe your concept]. ¿Qué te excitaría de esto? ¿Qué preocupaciones tendrías? ¿Cuánto pagarías de manera realista? ¿Qué necesitaría cambiar para que se convierta en un cliente? “

Los empresarios adolescentes a menudo luchan con la investigación de los clientes porque no pueden encuestar fácilmente a grandes grupos o contratar firmas de investigación de mercado. Este aviso ayuda a simular los comentarios de los clientes haciendo que ChatGPT adopte personas específicas.

Un adolescente que desarrolla un podcast para atletas adolescentes podría usar este enfoque pidiéndole a ChatGPT que responda a diferentes tipos de atletas adolescentes. Esto ayuda a identificar temas de contenido que resuenan y mensajes que se sienten auténticos para el público objetivo.

El aviso funciona mejor cuando se vuelve específico sobre la demografía, los puntos débiles y los contextos. “Actúa como un estudiante de último año de secundaria que solicita a la universidad” produce mejores ideas que “actuar como un adolescente”.

4. El mensaje mínimo de diseñador de prueba viable chatgpt

“Quiero probar esta idea de negocio: [describe concept] sin gastar más de [budget amount] o más de [time commitment]. Diseñe tres experimentos simples que podría ejecutar esta semana para validar la demanda de los clientes. Para cada prueba, explique lo que aprendería, cómo medir el éxito y qué resultados indicarían que debería avanzar “.

Este aviso ayuda a los adolescentes a adoptar la metodología Lean Startup sin perderse en la jerga comercial. El enfoque en “This Week” crea urgencia y evita la planificación interminable sin acción.

Un adolescente que desea probar un concepto de línea de ropa podría usar este indicador para diseñar experimentos de validación simples, como publicar maquetas de diseño en las redes sociales para evaluar el interés, crear un formulario de Google para recolectar pedidos anticipados y pedirles a los amigos que compartan el concepto con sus redes. Estas pruebas no cuestan nada más que proporcionar datos cruciales sobre la demanda y los precios.

5. El aviso de chatgpt del generador de claridad de tono

“Convierta esta idea de negocio en una clara explicación de 60 segundos: [describe your business]. La explicación debe incluir: el problema que resuelve, su solución, a quién ayuda, por qué lo elegirían sobre las alternativas y cómo se ve el éxito. Escríbelo en lenguaje de conversación que un adolescente realmente usaría “.

La comunicación clara separa a los empresarios exitosos de aquellos con buenas ideas pero una ejecución deficiente. Este aviso ayuda a los adolescentes a destilar conceptos complejos a explicaciones convincentes que pueden usar en todas partes, desde las publicaciones en las redes sociales hasta las conversaciones con posibles mentores.

El énfasis en el “lenguaje de conversación que un adolescente realmente usaría” es importante. Muchas plantillas de lanzamiento comercial suenan artificiales cuando se entregan jóvenes fundadores. La autenticidad es más importante que la jerga corporativa.

Más allá de las indicaciones de chatgpt: estrategia de implementación

La diferencia entre los adolescentes que usan estas indicaciones de manera efectiva y aquellos que no se reducen a seguir. ChatGPT proporciona dirección, pero la acción crea resultados.

Los jóvenes empresarios más exitosos con los que trabajo usan estas indicaciones como puntos de partida, no de punto final. Toman las sugerencias generadas por IA e inmediatamente las prueban en el mundo real. Llaman a clientes potenciales, crean prototipos simples e iteran en función de los comentarios reales.

Investigaciones recientes de Junior Achievement muestran que el 69% de los adolescentes tienen ideas de negocios, pero se sienten inciertos sobre el proceso de partida, con el miedo a que el fracaso sea la principal preocupación para el 67% de los posibles empresarios adolescentes. Estas indicaciones abordan esa incertidumbre al desactivar los conceptos abstractos en los próximos pasos concretos.

La imagen más grande

Los emprendedores adolescentes que utilizan herramientas de IA como ChatGPT representan un cambio en cómo está ocurriendo la educación empresarial. Según la investigación mundial de monitores empresariales, los jóvenes empresarios tienen 1,6 veces más probabilidades que los adultos de querer comenzar un negocio, y son particularmente activos en la tecnología, la alimentación y las bebidas, la moda y los sectores de entretenimiento. En lugar de esperar clases de emprendimiento formales o programas de MBA, estos jóvenes fundadores están accediendo a herramientas de pensamiento estratégico de inmediato.

Esta tendencia se alinea con cambios más amplios en la educación y la fuerza laboral. El Foro Económico Mundial identifica la creatividad, el pensamiento crítico y la resiliencia como las principales habilidades para 2025, la capacidad de las capacidades que el espíritu empresarial desarrolla naturalmente.

Programas como WIT brindan soporte estructurado para este viaje, pero las herramientas en sí mismas se están volviendo cada vez más accesibles. Un adolescente con acceso a Internet ahora puede acceder a recursos de planificación empresarial que anteriormente estaban disponibles solo para empresarios establecidos con presupuestos significativos.

La clave es usar estas herramientas cuidadosamente. ChatGPT puede acelerar el pensamiento y proporcionar marcos, pero no puede reemplazar el arduo trabajo de construir relaciones, crear productos y servir a los clientes. La mejor idea de negocio no es la más original, es la que resuelve un problema real para personas reales. Las herramientas de IA pueden ayudar a identificar esas oportunidades, pero solo la acción puede convertirlos en empresas que importan.

Noticias

Chatgpt vs. gemini: he probado ambos, y uno definitivamente es mejor

Published

10 meses agoon

5 junio, 2025

Precio

ChatGPT y Gemini tienen versiones gratuitas que limitan su acceso a características y modelos. Los planes premium para ambos también comienzan en alrededor de $ 20 por mes. Las características de chatbot, como investigaciones profundas, generación de imágenes y videos, búsqueda web y más, son similares en ChatGPT y Gemini. Sin embargo, los planes de Gemini pagados también incluyen el almacenamiento en la nube de Google Drive (a partir de 2TB) y un conjunto robusto de integraciones en las aplicaciones de Google Workspace.

Los niveles de más alta gama de ChatGPT y Gemini desbloquean el aumento de los límites de uso y algunas características únicas, pero el costo mensual prohibitivo de estos planes (como $ 200 para Chatgpt Pro o $ 250 para Gemini Ai Ultra) los pone fuera del alcance de la mayoría de las personas. Las características específicas del plan Pro de ChatGPT, como el modo O1 Pro que aprovecha el poder de cálculo adicional para preguntas particularmente complicadas, no son especialmente relevantes para el consumidor promedio, por lo que no sentirá que se está perdiendo. Sin embargo, es probable que desee las características que son exclusivas del plan Ai Ultra de Gemini, como la generación de videos VEO 3.

Ganador: Géminis

Plataformas

Puede acceder a ChatGPT y Gemini en la web o a través de aplicaciones móviles (Android e iOS). ChatGPT también tiene aplicaciones de escritorio (macOS y Windows) y una extensión oficial para Google Chrome. Gemini no tiene aplicaciones de escritorio dedicadas o una extensión de Chrome, aunque se integra directamente con el navegador.

(Crédito: OpenAI/PCMAG)

Chatgpt está disponible en otros lugares, Como a través de Siri. Como se mencionó, puede acceder a Gemini en las aplicaciones de Google, como el calendario, Documento, ConducirGmail, Mapas, Mantener, FotosSábanas, y Música de YouTube. Tanto los modelos de Chatgpt como Gemini también aparecen en sitios como la perplejidad. Sin embargo, obtiene la mayor cantidad de funciones de estos chatbots en sus aplicaciones y portales web dedicados.

Las interfaces de ambos chatbots son en gran medida consistentes en todas las plataformas. Son fáciles de usar y no lo abruman con opciones y alternar. ChatGPT tiene algunas configuraciones más para jugar, como la capacidad de ajustar su personalidad, mientras que la profunda interfaz de investigación de Gemini hace un mejor uso de los bienes inmuebles de pantalla.

Ganador: empate

Modelos de IA

ChatGPT tiene dos series primarias de modelos, la serie 4 (su línea de conversación, insignia) y la Serie O (su compleja línea de razonamiento). Gemini ofrece de manera similar una serie Flash de uso general y una serie Pro para tareas más complicadas.

Los últimos modelos de Chatgpt son O3 y O4-Mini, y los últimos de Gemini son 2.5 Flash y 2.5 Pro. Fuera de la codificación o la resolución de una ecuación, pasará la mayor parte de su tiempo usando los modelos de la serie 4-Series y Flash. A continuación, puede ver cómo funcionan estos modelos en una variedad de tareas. Qué modelo es mejor depende realmente de lo que quieras hacer.

Ganador: empate

Búsqueda web

ChatGPT y Gemini pueden buscar información actualizada en la web con facilidad. Sin embargo, ChatGPT presenta mosaicos de artículos en la parte inferior de sus respuestas para una lectura adicional, tiene un excelente abastecimiento que facilita la vinculación de reclamos con evidencia, incluye imágenes en las respuestas cuando es relevante y, a menudo, proporciona más detalles en respuesta. Gemini no muestra nombres de fuente y títulos de artículos completos, e incluye mosaicos e imágenes de artículos solo cuando usa el modo AI de Google. El abastecimiento en este modo es aún menos robusto; Google relega las fuentes a los caretes que se pueden hacer clic que no resaltan las partes relevantes de su respuesta.

Como parte de sus experiencias de búsqueda en la web, ChatGPT y Gemini pueden ayudarlo a comprar. Si solicita consejos de compra, ambos presentan mosaicos haciendo clic en enlaces a los minoristas. Sin embargo, Gemini generalmente sugiere mejores productos y tiene una característica única en la que puede cargar una imagen tuya para probar digitalmente la ropa antes de comprar.

Ganador: chatgpt

Investigación profunda

ChatGPT y Gemini pueden generar informes que tienen docenas de páginas e incluyen más de 50 fuentes sobre cualquier tema. La mayor diferencia entre los dos se reduce al abastecimiento. Gemini a menudo cita más fuentes que CHATGPT, pero maneja el abastecimiento en informes de investigación profunda de la misma manera que lo hace en la búsqueda en modo AI, lo que significa caretas que se puede hacer clic sin destacados en el texto. Debido a que es más difícil conectar las afirmaciones en los informes de Géminis a fuentes reales, es más difícil creerles. El abastecimiento claro de ChatGPT con destacados en el texto es más fácil de confiar. Sin embargo, Gemini tiene algunas características de calidad de vida en ChatGPT, como la capacidad de exportar informes formateados correctamente a Google Docs con un solo clic. Su tono también es diferente. Los informes de ChatGPT se leen como publicaciones de foro elaboradas, mientras que los informes de Gemini se leen como documentos académicos.

Ganador: chatgpt

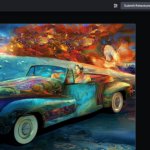

Generación de imágenes

La generación de imágenes de ChatGPT impresiona independientemente de lo que solicite, incluso las indicaciones complejas para paneles o diagramas cómicos. No es perfecto, pero los errores y la distorsión son mínimos. Gemini genera imágenes visualmente atractivas más rápido que ChatGPT, pero rutinariamente incluyen errores y distorsión notables. Con indicaciones complicadas, especialmente diagramas, Gemini produjo resultados sin sentido en las pruebas.

Arriba, puede ver cómo ChatGPT (primera diapositiva) y Géminis (segunda diapositiva) les fue con el siguiente mensaje: “Genere una imagen de un estudio de moda con una decoración simple y rústica que contrasta con el espacio más agradable. Incluya un sofá marrón y paredes de ladrillo”. La imagen de ChatGPT limita los problemas al detalle fino en las hojas de sus plantas y texto en su libro, mientras que la imagen de Gemini muestra problemas más notables en su tubo de cordón y lámpara.

Ganador: chatgpt

¡Obtenga nuestras mejores historias!

Toda la última tecnología, probada por nuestros expertos

Regístrese en el boletín de informes de laboratorio para recibir las últimas revisiones de productos de PCMAG, comprar asesoramiento e ideas.

Al hacer clic en Registrarme, confirma que tiene más de 16 años y acepta nuestros Términos de uso y Política de privacidad.

¡Gracias por registrarse!

Su suscripción ha sido confirmada. ¡Esté atento a su bandeja de entrada!

Generación de videos

La generación de videos de Gemini es la mejor de su clase, especialmente porque ChatGPT no puede igualar su capacidad para producir audio acompañante. Actualmente, Google bloquea el último modelo de generación de videos de Gemini, VEO 3, detrás del costoso plan AI Ultra, pero obtienes más videos realistas que con ChatGPT. Gemini también tiene otras características que ChatGPT no, como la herramienta Flow Filmmaker, que le permite extender los clips generados y el animador AI Whisk, que le permite animar imágenes fijas. Sin embargo, tenga en cuenta que incluso con VEO 3, aún necesita generar videos varias veces para obtener un gran resultado.

En el ejemplo anterior, solicité a ChatGPT y Gemini a mostrarme un solucionador de cubos de Rubik Rubik que resuelva un cubo. La persona en el video de Géminis se ve muy bien, y el audio acompañante es competente. Al final, hay una buena atención al detalle con el marco que se desplaza, simulando la detención de una grabación de selfies. Mientras tanto, Chatgpt luchó con su cubo, distorsionándolo en gran medida.

Ganador: Géminis

Procesamiento de archivos

Comprender los archivos es una fortaleza de ChatGPT y Gemini. Ya sea que desee que respondan preguntas sobre un manual, editen un currículum o le informen algo sobre una imagen, ninguno decepciona. Sin embargo, ChatGPT tiene la ventaja sobre Gemini, ya que ofrece un reconocimiento de imagen ligeramente mejor y respuestas más detalladas cuando pregunta sobre los archivos cargados. Ambos chatbots todavía a veces inventan citas de documentos proporcionados o malinterpretan las imágenes, así que asegúrese de verificar sus resultados.

Ganador: chatgpt

Escritura creativa

Chatgpt y Gemini pueden generar poemas, obras, historias y más competentes. CHATGPT, sin embargo, se destaca entre los dos debido a cuán únicas son sus respuestas y qué tan bien responde a las indicaciones. Las respuestas de Gemini pueden sentirse repetitivas si no calibra cuidadosamente sus solicitudes, y no siempre sigue todas las instrucciones a la carta.

En el ejemplo anterior, solicité ChatGPT (primera diapositiva) y Gemini (segunda diapositiva) con lo siguiente: “Sin hacer referencia a nada en su memoria o respuestas anteriores, quiero que me escriba un poema de verso gratuito. Preste atención especial a la capitalización, enjambment, ruptura de línea y puntuación. Dado que es un verso libre, no quiero un medidor familiar o un esquema de retiro de la rima, pero quiero que tenga un estilo de coohes. ChatGPT logró entregar lo que pedí en el aviso, y eso era distinto de las generaciones anteriores. Gemini tuvo problemas para generar un poema que incorporó cualquier cosa más allá de las comas y los períodos, y su poema anterior se lee de manera muy similar a un poema que generó antes.

Recomendado por nuestros editores

Ganador: chatgpt

Razonamiento complejo

Los modelos de razonamiento complejos de Chatgpt y Gemini pueden manejar preguntas de informática, matemáticas y física con facilidad, así como mostrar de manera competente su trabajo. En las pruebas, ChatGPT dio respuestas correctas un poco más a menudo que Gemini, pero su rendimiento es bastante similar. Ambos chatbots pueden y le darán respuestas incorrectas, por lo que verificar su trabajo aún es vital si está haciendo algo importante o tratando de aprender un concepto.

Ganador: chatgpt

Integración

ChatGPT no tiene integraciones significativas, mientras que las integraciones de Gemini son una característica definitoria. Ya sea que desee obtener ayuda para editar un ensayo en Google Docs, comparta una pestaña Chrome para hacer una pregunta, pruebe una nueva lista de reproducción de música de YouTube personalizada para su gusto o desbloquee ideas personales en Gmail, Gemini puede hacer todo y mucho más. Es difícil subestimar cuán integrales y poderosas son realmente las integraciones de Géminis.

Ganador: Géminis

Asistentes de IA

ChatGPT tiene GPT personalizados, y Gemini tiene gemas. Ambos son asistentes de IA personalizables. Tampoco es una gran actualización sobre hablar directamente con los chatbots, pero los GPT personalizados de terceros agregan una nueva funcionalidad, como el fácil acceso a Canva para editar imágenes generadas. Mientras tanto, terceros no pueden crear gemas, y no puedes compartirlas. Puede permitir que los GPT personalizados accedan a la información externa o tomen acciones externas, pero las GEM no tienen una funcionalidad similar.

Ganador: chatgpt

Contexto Windows y límites de uso

La ventana de contexto de ChatGPT sube a 128,000 tokens en sus planes de nivel superior, y todos los planes tienen límites de uso dinámicos basados en la carga del servidor. Géminis, por otro lado, tiene una ventana de contexto de 1,000,000 token. Google no está demasiado claro en los límites de uso exactos para Gemini, pero también son dinámicos dependiendo de la carga del servidor. Anecdóticamente, no pude alcanzar los límites de uso usando los planes pagados de Chatgpt o Gemini, pero es mucho más fácil hacerlo con los planes gratuitos.

Ganador: Géminis

Privacidad

La privacidad en Chatgpt y Gemini es una bolsa mixta. Ambos recopilan cantidades significativas de datos, incluidos todos sus chats, y usan esos datos para capacitar a sus modelos de IA de forma predeterminada. Sin embargo, ambos le dan la opción de apagar el entrenamiento. Google al menos no recopila y usa datos de Gemini para fines de capacitación en aplicaciones de espacio de trabajo, como Gmail, de forma predeterminada. ChatGPT y Gemini también prometen no vender sus datos o usarlos para la orientación de anuncios, pero Google y OpenAI tienen historias sórdidas cuando se trata de hacks, filtraciones y diversos fechorías digitales, por lo que recomiendo no compartir nada demasiado sensible.

Ganador: empate

Related posts

Trending

-

Startups2 años ago

Startups2 años agoRemove.bg: La Revolución en la Edición de Imágenes que Debes Conocer

-

Tutoriales2 años ago

Tutoriales2 años agoCómo Comenzar a Utilizar ChatGPT: Una Guía Completa para Principiantes

-

Startups2 años ago

Startups2 años agoDeepgram: Revolucionando el Reconocimiento de Voz con IA

-

Startups2 años ago

Startups2 años agoStartups de IA en EE.UU. que han recaudado más de $100M en 2024

-

Recursos2 años ago

Recursos2 años agoCómo Empezar con Popai.pro: Tu Espacio Personal de IA – Guía Completa, Instalación, Versiones y Precios

-

Recursos2 años ago

Recursos2 años agoPerplexity aplicado al Marketing Digital y Estrategias SEO

-

Estudiar IA2 años ago

Estudiar IA2 años agoCurso de Inteligencia Artificial Aplicada de 4Geeks Academy 2024

-

Noticias2 años ago

Noticias2 años agoDos periodistas octogenarios deman a ChatGPT por robar su trabajo