News

Sam Altman Reveals This Prior Flaw In OpenAI Advanced AI o1 During ChatGPT Pro Announcement But Nobody Seemed To Widely Notice

Published 1 year ago

A hidden flaw or inconvenience in OpenAI o1 was recently aired, and though fixed, it raises significant considerations about present-day and future AI.

In today’s column, I examine a hidden flaw in OpenAI’s advanced o1 AI model that Sam Altman revealed during the recent “12 Days Of OpenAI” video-streamed ChatGPT Pro announcement. His acknowledgment of the flaw was not especially noted in the media since he covered it quite nonchalantly in a subtle hand-waving fashion and also claimed that it was now fixed. Whether the flaw, or as some contend the “inconvenience,” was even worthy of consideration is another intriguing facet that gives pause for thought about the current state of AI and how far or close we are to the attainment of artificial general intelligence (AGI).

Let’s talk about it.

This analysis of an innovative proposition is part of my ongoing Forbes column coverage on the latest in AI including identifying and explaining various impactful AI complexities (see the link here). For my analysis of the key features and vital advancements in the OpenAI o1 AI model, see the link here and the link here, covering various aspects such as chain-of-thought reasoning, reinforcement learning, and the like.

How Humans Respond To Fellow Humans

Before I delve into the meat and potatoes of the matter, a brief foundational-setting treatise might be in order.

When you converse with a fellow human, you normally expect the timing of their response to fit the nature of the conversation. For example, if you say “hello” to someone, the odds are that you expect them to respond rather quickly with a dutiful reply such as hello, hey, howdy, etc. There shouldn’t be much of a delay in such a perfunctory response. It’s a no-brainer, as they say.

On the other hand, if you ask someone to explain the meaning of life, the odds are that any seriously studious response will start after the person has ostensibly put their thoughts into order. They would presumably give in-depth consideration to the nature of human existence, including our place in the universe, and otherwise assemble a well-thought-out answer. This assumes that the question was asked in all seriousness and that the respondent is aiming to reply in all seriousness.

The gist is that the time to respond will tend to depend on the proffered remark or question.

A presented simple comment or remark involving no weighty question or arduous heaviness ought to get a fast response. The responding person doesn’t need to engage in much mental exertion in such instances. You get a near-immediate response. If the presented utterance has more substance to it, we will reasonably allow time for the other person to undertake a judicious reflective moment. A delay in responding is perfectly fine and fully expected in that case.

That is the usual cadence of human-to-human discourse.

Off-Cadence Timing Of Advanced o1 AI

If you perchance made use of OpenAI’s advanced o1 AI model, you might have noticed something that was outside of the cadence that I just mentioned. The human-to-AI cadence bordered on being curious and possibly annoying.

The deal was this.

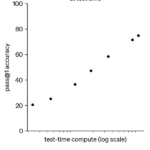

You were suitably forewarned when using o1 that getting more in-depth answers would mean a longer wait between entering a prompt and getting a response from the AI. Wait time went up. This has to do with the internally added capabilities of advanced AI functionality including chain-of-thought reasoning, reinforcement learning, and so on, see my explanation at the link here. The response latency had significantly increased.

Whereas in earlier and less advanced generative AI and LLMs we had all gotten used to near instantaneous responses, by and large, there was a willingness to wait longer to get more deeply mined responses via advanced o1 AI. That seems like a fair tradeoff. People will wait longer if they can get better answers. They won’t wait longer if the answers aren’t going to be better than when the response time was quicker.

You can think of this speed-of-response as akin to playing chess. The opening move of a chess game is usually like a flash. Each side quickly makes their initial move and countermove. Later in the game, the time to respond is bound to slow down as each player puts concentrated thoughts into the matter. Just about everyone experiences that expected cadence when playing chess.

What was o1 doing in terms of cadence?

Aha, you might have noticed that when you gave o1 a simple prompt, including even merely saying hello, the AI took about as much time to respond as when answering an extremely complex question. In other words, the response time was roughly the same for the simplest of prompts and for the most complicated ones requiring deep-diving, fully worked answers.

It was a puzzling phenomenon and didn’t conform to any reasonably expected cadence for a human-to-AI experience.

In coarser language, that dog don’t hunt.

Examples Of What This Cadence Was Like

As an illustrative scenario, consider two prompts, one that ought to be quickly responded to and another for which we would fairly allow more time before seeing a reply.

First, a simple prompt that ought to lead to a simple and quick response.

- My entered prompt: “Hi.”

- Generative AI response: “Hello, how can I help you?”

The time between the prompt and the response was about 10 seconds.

Next, I’ll try a beefy prompt.

- My entered prompt: “Tell me how all of existence first began, covering all known theories.”

- Generative AI response: “Here is a summary of all available theories on the topic…”

The time for the AI to generate a response to that beefier question was about 12 seconds.

I think we can agree that the first and extremely simple prompt should have had a response time of just a few seconds at most. The response time shouldn’t be nearly the same as when responding to the question about how all of existence began. Yet, it was.

Something is clearly amiss.

But you probably wouldn’t have complained since the ability to get in-depth answers was worth the irritating and eyebrow-raising wait time for the simpler prompts. I dare say most users just shrugged their shoulders and figured it was somehow supposed to work that way.

Sam Altman Mentioned That This Has Been Fixed

During the ChatGPT Pro announcement, Sam Altman brought up the somewhat sticky matter and noted that the issue had been fixed. Thus, you presumably should henceforth expect a fast response time to simple prompts. And, as already reasonably expected, only prompts requiring greater intensity of computational effort ought to take up longer response times.

That’s how the world is supposed to work. The universe has been placed back into proper balance. Hooray, yet another problem solved.

Few seemed to catch onto his offhand commentary on the topic. Media coverage pretty much skipped past that portion and went straight to the more exciting pronouncements. The whole thing about the response times was likely perceived as a non-issue and not worthy of talking about.

Well, for reasons I’m about to unpack, I think it is worthy to ruminate on.

Turns out there is a lot more to this than perhaps meets the eye. It is a veritable gold mine of intertwining considerations about the nature of contemporary AI and the future of AI. That being said, I certainly don’t want to make a mountain out of a molehill, but nor should we let this opportune moment pass without closely inspecting the gold nuggets that were fortuitously revealed.

Go down the rabbit hole with me, if you please.

Possible Ways In Which This Happened

Let’s take a moment to examine various ways in which the off-balance cadence in the human-to-AI interaction might have arisen. OpenAI considers their AI to be proprietary and they don’t reveal the innermost secrets, ergo I’ll have to put on my AI-analysis detective hat and do some outside-the-box sleuthing.

First, the easiest way to explain things is that an AI maker might decide to hold back all responses until some timer says to release the response.

Why do this?

A rationalization is that the AI maker wants all responses to come out roughly on the same cadence. For example, even if a response has been computationally determined in say 2 seconds, the AI is instructed to keep the response at bay until the time reaches say 10 seconds.

I think you can see how this works out to a seemingly even cadence. A tough-to-answer query might require 12 entire seconds. The response wasn’t ready until after the timer was done. That’s fine. At that juncture, you show the user the response. Only when a response takes less than the time limit will the AI hold back the response.
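To make the timer idea concrete, here is a minimal sketch in Python. It is purely my own illustration of the speculated hold-back approach and not anything OpenAI has confirmed; the function name `release_time` and the 10-second floor are assumptions borrowed from the numbers used above.

```python
def release_time(compute_seconds: float, floor_seconds: float = 10.0) -> float:
    """Seconds after the prompt at which the user sees the response.

    Hypothetical hold-back rule: a response that is ready early is held
    until the floor is reached; a slower response is shown when ready.
    """
    return max(compute_seconds, floor_seconds)


# A response computed in 2 seconds is held until the 10-second floor.
quick = release_time(2.0)
# A response needing 12 seconds is shown as soon as it is ready.
slow = release_time(12.0)
print(f"quick prompt shown at {quick:.0f}s, hard prompt shown at {slow:.0f}s")
```

The user sees 10 seconds versus 12 seconds, even though the underlying work differed by a factor of six.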

In the end, the user would get used to seeing all responses arrive after at least 10 seconds and fall into a mental haze that no matter what happens, they will need to wait at least that long to see a response. Boom, the user is essentially being behaviorally trained to accept that responses will take that threshold of time. They don’t know they are being trained. Nothing tips them to this ruse.

Best of all, from the AI maker’s perspective, no one will get upset about timing since nothing ever happens sooner than the hidden limit anyway. Elegant and the users are never cognizant of the under-the-hood trickery.

The Gig Won’t Last And Questions Will Be Asked

The danger for the AI maker comes to the fore when software sophisticates start to question the delays. Any proficient software developer or AI specialist would right away be suspicious that the simplest of entries is causing lengthy latency. It’s not a good look. Insiders begin to ask what’s up with that.

If a fake time limit is being used, that’s often frowned upon by insiders who would shame those developers undertaking such an unseemly route. There isn’t anything wrong per se. It is more that doing so is considered a low-brow or discreditable act. It is just not part of the virtuous coding ethos.

I am going to cross out that culprit and move toward a presumably more likely suspect.

It goes like this.

I refer to this other possibility as the gauntlet walk.

A brief tale will suffice as illumination. Imagine that you went to the DMV to get up-to-date license tags for your car. In theory, if all the paperwork is already done, all you need to do is show your ID and they will hand you the tags. Some modernized DMVs have an automated kiosk in the lobby that dispenses tags so that you can just scan your ID and voilà, you instantly get your tags and walk right out the door. Happy face.

Sadly, some DMVs are not yet modernized. They treat all requests the same and make you wait as though you were there to have surgery done. You check in at one window. They tell you to wait over there. Your name is called, and you go to a pre-processing window. The agent then tells you to wait in a different spot until your name is once again called. At the next processing window, they do some of the paperwork but not all of it. On and on this goes.

The upshot is that no matter what your request consists of you are by-gosh going to walk the full gauntlet. Tough luck to you. Live with it.

A generative AI app or large language model (LLM) could be devised similarly. No matter what the prompt contains, an entire gauntlet of steps is going to occur. Everything must endure all the steps. Period, end of story.

In that case, you would typically have responses arriving after roughly the same amount of time. The timing could vary somewhat because, for a simple prompt, the internal machinery such as the chain-of-thought mechanism is going to pass the tokens through without having to do nearly the same amount of computational work, see my explanation at the link here. Nonetheless, time is consumed even when the content is being merely shunted along.

That could account for the simplest of prompts taking much longer than we expect them to take.
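Here is a small Python sketch of the gauntlet walk, offered as a toy model rather than a description of how o1 actually works. The stage names, the fixed per-stage overheads, and the per-difficulty costs are all hypothetical numbers that I picked only to echo the 10-second versus 12-second example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    overhead_s: float  # fixed cost every prompt pays just for passing through
    per_unit_s: float  # extra cost per unit of prompt difficulty


# Hypothetical stages and timings, for illustration only.
GAUNTLET = (
    Stage("intake", 1.0, 0.0),
    Stage("chain_of_thought", 6.5, 0.15),
    Stage("answer_review", 2.5, 0.05),
)


def gauntlet_latency(difficulty: float, stages=GAUNTLET) -> float:
    """Total seconds when every prompt must pass through every stage."""
    return sum(s.overhead_s + s.per_unit_s * difficulty for s in stages)


simple = gauntlet_latency(0)   # a bare greeting, no real work needed
hard = gauntlet_latency(10)    # a heavyweight question
print(f"simple: {simple:.1f}s, hard: {hard:.1f}s")
```

Because the fixed overhead dominates, the simple prompt still pays nearly the full toll even though the stages do almost no real work on it.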

How It Happens Is A Worthy Question

Your immediate thought might be why in the heck would a generative AI app or LLM be devised to treat all prompts as though they must walk the full gauntlet. This doesn’t seem to pass the smell test. It would seem obvious that a fast path like at Disneyland should be available for prompts that don’t need the whole kit and caboodle.

Well, I suppose you could say the same about the DMV. Here’s what I mean. Most DMVs were probably set up without much concern toward allowing multiple paths. The overall design takes a lot more contemplation and building time to provide sensibly shaped forked paths. If you are in a rush to get a DMV underway, you come up with a single path that covers all the bases. Therefore, everyone is covered. Making everyone wait the same is okay because at least you know that nothing will get lost along the way.

Sure, people coming in the door who have trivial or simple requests will need to wait as long as those with the most complicated of requests, but that’s not something you need to worry about upfront. Later, if people start carping about the lack of speediness, okay, you then try to rejigger the process to allow for multiple paths.

The same might be said for when trying to get advanced AI out the door. You are likely more interested in making sure that the byzantine and innovative advanced capabilities work properly, versus whether some prompts ought to get the greased skids.

A twist to that is the idea that you are probably more worried about maximum latencies than you would be about minimums. This stands to reason. Your effort to optimize is going to focus on trying to keep the AI from running endlessly to generate a response. People will only wait so long to get a response, even for highly complex prompts. Put your elbow grease toward the upper bounds versus the lower bounds.

The Tough Call On Categorizing Prompts

An equally tough consideration is exactly how you determine which prompts are suitably deserving of quick responses.

Well, maybe you just count the number of words in the prompt.

A prompt with just one word would seem unlikely to be worthy of the full gauntlet. Let it pass through or maybe skip some steps. This though doesn’t quite bear out. A prompt with a handful of words might be easy-peasy, while another prompt with the same number of words might be a doozy. Keep in mind that prompts consist of everyday natural language, which is semantically ambiguous, and you can open a can of worms with just a scant number of words.

This is not like sorting apples or widgets.

All in all, a prudent categorization in this context cannot do something blindly such as purely relying on the number of words. The meaning of the prompt comes into the big picture. A five-word prompt that requires little computational analysis is likely only discerned as a small chore by determining what the prompt is all about.
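A tiny Python sketch shows why a pure word count falls short. The function name and the five-word threshold are my own hypothetical choices for illustration, and the two sample prompts are made up.

```python
def word_count_says_simple(prompt: str, max_words: int = 5) -> bool:
    """Naive rule: treat any prompt of max_words or fewer as simple."""
    return len(prompt.split()) <= max_words


easy = "Tell me a joke."
doozy = "Prove the Riemann hypothesis."

# Both prompts have four words, so the naive rule cannot tell them apart.
print(word_count_says_simple(easy), word_count_says_simple(doozy))
```

Both prompts get waved through as simple, yet only one of them truly is. The meaning has to be examined, not just the length.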

Note that this means you indubitably have to do some amount of initial processing to gauge what the prompt constitutes. Once you’ve got that first blush done, you can have the AI flow the prompt through the other elements with a kind of flag that indicates this is a quick-turnaround request, i.e., work on it quickly and move it along.
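The first-blush assessment plus a flag can be sketched in Python as follows. This is a toy stand-in under my own assumptions: the `triage` check is just a lookup against a hypothetical list of greetings, whereas a real system would need to gauge meaning, and the step names are invented for illustration.

```python
# Hypothetical list of trivially simple utterances.
GREETINGS = {"hi", "hello", "hey", "howdy", "thanks"}


def triage(prompt: str) -> bool:
    """First-blush check: True means the prompt is flagged as quick."""
    normalized = prompt.strip().lower().strip(".!?")
    return normalized in GREETINGS


def run(prompt: str) -> list[str]:
    """Return the steps a prompt passes through, honoring the quick flag."""
    quick = triage(prompt)
    steps = ["triage"]
    if not quick:
        # Only unflagged prompts walk the heavyweight steps.
        steps += ["chain_of_thought", "answer_review"]
    steps.append("respond")
    return steps


print(run("Hi."))
print(run("Tell me how all of existence first began, covering all known theories."))
```

Note that the triage step is never skipped, which reflects the point that some initial processing is unavoidable.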

You could also establish a separate line of machinery for the short ones, but that’s probably more costly and not something you can concoct overnight. DMVs often kept the same arrangement inside the customer-facing processing center and merely adjusted by allowing the skipping of windows. Eventually, newer avenues were developed such as the use of automated kiosks.

Time will tell in the case of AI.

There is a wide variety of highly technical techniques underlying prompt-assessment and routing issues, which I will be covering in detail in later postings so keep your eyes peeled. Some of the techniques are:

- (1) Prompt classification and routing

- (2) Multi-tier model architecture

- (3) Dynamic attention mechanisms

- (4) Adaptive token processing

- (5) Caching and pre-built responses

- (6) Heuristic cutoffs for contextual expansion

- (7) Model layer pruning on demand

I realize that seems relatively arcane. Admittedly, it’s one of those inside baseball topics that only heads-down AI researchers and developers are likely to care about. It is a decidedly niche aspect of generative AI and LLMs. In the same breath, we can likely agree that it is an important arena since people aren’t likely to use models that make them wait for simple prompts.

AI makers that seek widespread adoption of their AI wares need to give due consideration to the gauntlet walk problem.

Put On Your Thinking Cap And Get To Work

A few final thoughts before finishing up.

The prompt-assessment task is crucial in an additional fashion. The AI could inadvertently arrive at false positives and false negatives. Here’s what that foretells. Suppose the AI assesses that a prompt is simple and opts to therefore avoid full processing, but then the reality is that the answer produced is insufficient and the AI misclassified the prompt.

Oops, a user gets a shallow answer.

They are irked.

The other side of the coin is not pretty either. Suppose the AI assesses that a prompt should get the full treatment, shampoo and conditioner included, but essentially wastes time and computational resources because the prompt should have been categorized as simple. Oops, the user waited longer than they should have, plus they paid for computational resources they needn’t have consumed.

Awkward.

Overall, prompt-assessment must strive for the Goldilocks principle. Do not be too cold or too hot. Aim to avoid false positives and false negatives. It is a dicey dilemma and well worth a lot more AI research and development.
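To keep the two kinds of mistakes straight, here is a short Python sketch that tallies them. I am assuming a convention in which “positive” means the AI classified the prompt as simple; the sample cases are made up for illustration.

```python
def assessment_errors(cases):
    """Count misclassifications in a list of (predicted_simple, actually_simple).

    False positive: flagged simple but actually hard (user gets a shallow answer).
    False negative: flagged hard but actually simple (user waits and pays needlessly).
    """
    false_pos = sum(1 for pred, actual in cases if pred and not actual)
    false_neg = sum(1 for pred, actual in cases if not pred and actual)
    return false_pos, false_neg


# Made-up outcomes for five prompts.
cases = [
    (True, True),    # correctly fast-tracked
    (True, False),   # shallow answer to a hard prompt
    (False, True),   # full treatment wasted on a simple prompt
    (False, False),  # correctly given the full treatment
    (False, True),   # wasted again
]
false_pos, false_neg = assessment_errors(cases)
print(f"false positives: {false_pos}, false negatives: {false_neg}")
```

The Goldilocks aim is to drive both counts down at once, which is harder than minimizing either one alone.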

My final comment is about the implications associated with striving for artificial general intelligence (AGI). AGI is considered the aspirational goal of all those pursuing advances in AI. The belief is that with hard work we can get AI to be on par with human intelligence, see my in-depth analysis of this at the link here.

How do the prompt-assessment issue and the vaunted gauntlet walk relate to AGI?

Get yourself ready for a mind-bending reason.

AGI Ought To Know Better

Efforts to get modern-day AI to respond appropriately such that simple prompts get quick response times while hefty prompts take time to produce are currently being devised by humans. AI researchers and developers go into the code and make changes. They design and redesign the processing gauntlet. And so on.

It seems that any AGI worth its salt would be able to figure this out on its own.

Do you see what I mean?

An AGI would presumably gauge that there is no need to put a lot of computational mulling toward simple prompts. Most humans would do the same. Humans interacting with fellow humans would discern that waiting a long time to respond is going to be perceived as an unusual cadence when in discourse covering simple matters. Humans would undoubtedly self-adjust, assuming they have the mental capacity to do so.

In short, if we are just a stone’s throw away from attaining AGI, why can’t AI figure this out on its own? The lack of AI being able to self-adjust and self-reflect is perhaps a telltale sign. The said-to-be sign is that our current era of AI is not on the precipice of becoming AGI.

Boom, drop the mic.

Get yourself a glass of fine wine and find a quiet place to reflect on that contentious contention. When digging into it, you’ll need to decide if it is a simple prompt or a hard one, and judge how fast you think you can respond to it. Yes, indeed, humans are generally good at that kind of mental gymnastics.

You may like


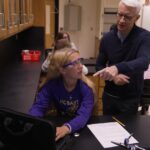

Reviving Engagement in the Spanish Classroom: A Musical Challenge with ChatGPT - Faculty Focus

Published June 6, 2025

Midway through the semester, it is not unusual to notice a shift in your students’ energy levels (Baghurst and Kelley, 2013; Kumari et al., 2021). The initial enthusiasm for learning a foreign language can wane as other courses with demanding assignments compete for their attention. Some students prioritize subjects they perceive as more directly tied to their major or career, while others simply feel the weight of mid-semester burnout. In the spring, the long winter months can add to this fatigue, making it even harder to keep students engaged (Rohan and Sigmon, 2000).

This is the moment when a language instructor must pivot, changing the classroom dynamic to rekindle curiosity and motivation. Although instructors strive to incorporate activities that cater to the five preferred learning styles (Felder and Henriques, 1995), namely visual (learning through images and spatial understanding), auditory (learning through listening and discussion), reading/writing (learning through text-based interaction), kinesthetic (learning through movement and hands-on activities), and multimodal (a combination of multiple styles), it is also beneficial to enliven structured and, after a while, predictable classes with activities that break the mold. Introducing something unexpected and different from the established classroom dynamic can re-energize students, foster creativity, and boost their enthusiasm for learning.

Music, in particular, has long been an ally of instructors teaching a second language (L2), a language learned after the native tongue, especially since the field transitioned toward a more communicative approach. Rooted in interaction and real-world application, the communicative approach prioritizes meaningful engagement over rote memorization, helping students develop fluency in natural, immersive ways. Research has consistently highlighted the benefits of music in L2 acquisition, from improving pronunciation and listening skills to enhancing vocabulary retention and cultural understanding (DeGrave, 2019; Kumar et al. 2022; Nuessel and Marshall, 2008; Vidal and Nordgren, 2024).

Building on this tradition, the activity we share here not only incorporates music but also integrates artificial intelligence, adding a new layer of engagement and critical thinking. By using AI as a tool in the learning process, students not only become familiar with its capabilities but also develop the ability to critically evaluate the content it generates. This approach encourages them to reflect on language, meaning, and interpretation while engaging in text analysis, creative writing, public speaking, and gamification, all within an interactive and culturally rich framework.

Activity description: Desafío musical con ChatGPT: “Canta y descubre” (Musical Challenge with ChatGPT: “Sing and Discover”)

Objective:

Students will improve their listening comprehension and written production in Spanish by analyzing and recreating song lyrics with the help of ChatGPT. Although the instructions are presented here in English, the activity should be conducted in the target language, whether Spanish or another language is being taught.

Instructions:

1. Listen and decode

- Divide the class into groups of 2-3 students.

- Choose a song in Spanish (for example, La Llorona by Chavela Vargas, Oye Cómo Va by Tito Puente, Vivir Mi Vida by Marc Anthony).

- Give each group an incomplete version of the lyrics with missing words.

- Students listen to the song and fill in the blanks.

2. Interpret and discuss

- Within their groups, students analyze the meaning of the song.

- They discuss what they think the lyrics convey, including emotions, themes, and any cultural references they recognize.

- What do you think the song is trying to communicate?

- What emotions or feelings do the lyrics evoke for you?

- Can you identify any cultural references in the song? How do they shape its meaning?

- How does the music (melody, rhythm, etc.) influence your interpretation of the lyrics?

- Each group shares its interpretation with the class.

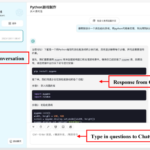

3. Compare with ChatGPT

- After forming their own analysis, students ask ChatGPT:

- What do you think the song is trying to communicate?

- What emotions or feelings do the lyrics evoke for you?

- They compare ChatGPT’s interpretation with their own ideas and discuss similarities or differences.

4. Create your own verse

- Each group writes a new verse that matches the style and rhythm of the song.

- They can ask ChatGPT for help: “Help us write a new verse for this song in the same style.”

5. Perform and sing

- Each group presents its new verse to the class.

- If they feel comfortable, they can sing it using the original melody.

- It is helpful for the teacher to have a karaoke (instrumental) version of the song available so that the students’ lyrics can be heard clearly.

- Displaying the new lyrics on a monitor or projector lets other students follow along and sing together, enhancing the collective experience.

6. Vote: The Grammy goes to

Students vote on different categories, including:

- Best adaptation

- Best reflection

- Best performance

- Best attitude

- Best collaboration

7. Final reflection

- What was the most challenging part of understanding the lyrics?

- How did ChatGPT help interpret the song?

- What new words or expressions did you learn?

Final thoughts: music, AI, and critical thinking

Desafío musical con ChatGPT: “Canta y descubre” (Musical Challenge with ChatGPT: “Sing and Discover”) is an activity that I have found to be especially effective in my intermediate and advanced courses. I use it when students feel overwhelmed or distracted, often around midterms, as a way to help them relax and reconnect with the material. It serves as a refreshing break, allowing students to step away from the stress of assignments and refocus in a fun, interactive way. By incorporating music, creativity, and technology, we keep students present in class, even when everything else seems to demand their attention.

Beyond offering a well-deserved break, this activity sparks engaging discussions about language interpretation, cultural context, and the role of AI in education. As students compare their own interpretations of the song lyrics with those generated by ChatGPT, they begin to recognize both the value and the limitations of AI. These insights foster critical thinking, helping them develop a more mature approach to technology and its impact on their learning.

Adding the karaoke element further enhances the experience, giving students the chance to perform their new verses and have fun while practicing their language skills. Displaying the lyrics on a screen makes the activity more inclusive, allowing everyone to follow along. Selecting songs that resonate with students’ tastes, whether a classic like La Llorona or a contemporary hit from artists such as Bad Bunny, Selena, Daddy Yankee, or Karol G, makes the activity feel even more personal and engaging.

This activity is not limited to the classroom. It is a great addition to Spanish clubs or special events, where students can bond over a shared love of music while practicing their language skills. After all, who doesn’t enjoy a good parody of their favorite song?

By blending language learning with music and technology, Desafío musical con ChatGPT creates a dynamic, interactive environment that re-energizes students and deepens their connection with the language and the evolving role of AI. It turns moments of burnout into opportunities for creativity, cultural exploration, and renewed enthusiasm for learning.

Angela Rodríguez Mooney, PhD, is an assistant professor of Spanish at Texas Woman’s University.

References

Baghurst, Timothy, and Betty C. Kelley. “An examination of stress in college students over the course of a semester.” Health Promotion Practice 15, no. 3 (2014): 438-447.

DeGrave, Pauline. “Music in the foreign language classroom: How and why?” Journal of Language Teaching and Research 10, no. 3 (2019): 412-420.

Felder, Richard M., and Eunice R. Henriques. “Learning and teaching styles in foreign and second language education.” Foreign Language Annals 28, no. 1 (1995): 21-31.

Nuessel, Frank, and April D. Marshall. “Practices and principles for engaging the three communicative modes in Spanish through songs and music.” Hispania (2008): 139-146.

Kumar, Tribhuwan, Shamim Akhter, Mehrunnisa M. Yunus, and Atefeh Shamsy. “Use of music and songs as pedagogical tools in teaching English as foreign language contexts.” Education Research International 2022, no. 1 (2022): 1-9.


5 ChatGPT Prompts That Can Help Teens Launch a Startup

Published June 5, 2025

Teen entrepreneur using ChatGPT to help with his business

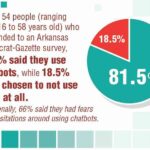

Teen entrepreneurship continues to rise. According to Junior Achievement research, 66% of U.S. teens ages 13 to 17 say they are likely to consider starting a business as adults, and the Global Entrepreneurship Monitor 2023-2024 finds that 24% of 18- to 24-year-olds are currently entrepreneurs. These young founders are not just dreaming; they are building real businesses that generate revenue and create social impact, and they are using ChatGPT prompts to help them.

At WIT (Whatever It Takes), the organization I founded in 2009, we have worked with more than 10,000 young entrepreneurs. Over the past year, I have observed a shift in how teens approach business planning. With our guidance, they are using AI tools like ChatGPT not as shortcuts but as strategic thinking partners to clarify ideas, test concepts, and accelerate execution.

The most successful teen entrepreneurs have discovered specific prompts that help them move from idea to action. These are not generic brainstorming sessions. They are using targeted questions that address the unique challenges young founders face: limited resources, school commitments, and the need to prove their concepts quickly.

Here are five ChatGPT prompts that consistently help teen entrepreneurs build businesses that matter.

1. The Problem-First Discovery ChatGPT Prompt

“I notice that [specific group of people] struggle with [specific problem I’ve observed]. Help me better understand this problem by explaining: 1) why this problem exists, 2) what solutions currently exist and why they are insufficient, 3) how much people might pay to solve this, and 4) three specific ways I could test whether this is a real problem worth solving.”

A teen might use this prompt after noticing that students at school struggle to pay for lunch. Instead of assuming they understand the full scope, they could ask ChatGPT to research school lunch debt as a systemic problem. This research may lead them to create a product-based business where the proceeds help pay off lunch debt, combining profit with purpose.

Teens notice problems differently than adults because they experience unique frustrations, from school organization challenges to social media to environmental concerns. According to Square’s research on Gen Z entrepreneurs, 84% plan to be business owners within five years, making them ideal candidates for problem-solving businesses.

2. The Resource Reality Check ChatGPT Prompt

“I am [age] years old with approximately [dollar amount] to invest and [number] hours per week available between school and other commitments. Based on these constraints, what are three business models I could realistically launch this summer? For each option, include startup costs, time requirements, and the first three steps to get started.”

This prompt addresses the elephant in the room: most teen entrepreneurs have limited money and time. When a 16-year-old entrepreneur uses this approach to evaluate a greeting card business concept, they may discover they can start with $200 and scale gradually. By being realistic about constraints up front, they avoid overcommitting and can build toward sustainable revenue goals.

According to Square’s Gen Z report, 45% of young entrepreneurs use their savings to start businesses, with 80% launching online or with a mobile component. This data supports the effectiveness of constraint-based planning: when teens work within realistic limits, they create more sustainable business models.

3. The Customer Voice Simulator ChatGPT Prompt

“Act as a [specific demographic] and give me honest feedback on this business idea: [describe your concept]. What would excite you about it? What concerns would you have? How much would you realistically pay? What would need to change for you to become a customer?”

Teen entrepreneurs often struggle with customer research because they can’t easily survey large groups or hire market research firms. This prompt helps simulate customer feedback by having ChatGPT adopt specific personas.

A teen developing a podcast for teen athletes might use this approach by asking ChatGPT to respond as different types of teen athletes. This helps identify content topics that resonate and messaging that feels authentic to the target audience.

The prompt works best when you get specific about demographics, pain points, and contexts. “Act as a high school senior applying to college” produces better insights than “act as a teenager.”
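For readers who want to reuse the prompt across several personas, here is a minimal sketch in Python that fills the bracketed placeholders programmatically. The template wording mirrors the prompt above; the names `CUSTOMER_VOICE` and `build_prompt` are hypothetical and used only for illustration, and the resulting text would still need to be pasted into ChatGPT by hand.

```python
from string import Template

# Hypothetical template mirroring the customer voice simulator prompt.
# $persona and $concept stand in for the bracketed placeholders.
CUSTOMER_VOICE = Template(
    "Act as a $persona and give me honest feedback on this business idea: "
    "$concept. What would excite you about it? What concerns would you have? "
    "How much would you realistically pay? What would need to change for you "
    "to become a customer?"
)


def build_prompt(persona: str, concept: str) -> str:
    """Fill both placeholders in the template and return the finished prompt."""
    return CUSTOMER_VOICE.substitute(persona=persona, concept=concept)


# A specific persona, as the article recommends, rather than "a teenager".
prompt = build_prompt(
    persona="high school senior applying to college",
    concept="a podcast for teen athletes",
)
print(prompt)
```

Swapping in a different `persona` string for each run is one simple way to compare how different customer groups might react to the same concept.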

4. The Minimum Viable Test Designer ChatGPT Prompt

“I want to test this business idea: [describe concept] without spending more than [budget amount] or more than [time commitment]. Design three simple experiments I could run this week to validate customer demand. For each test, explain what I would learn, how to measure success, and what results would indicate I should move forward.”

This prompt helps teens adopt the Lean Startup methodology without getting lost in business jargon. The focus on “this week” creates urgency and prevents endless planning without action.

A teen who wants to test a clothing line concept might use this prompt to design simple validation experiments, such as posting design mockups on social media to gauge interest, creating a Google Form to collect pre-orders, and asking friends to share the concept with their networks. These tests cost nothing but provide crucial data on demand and pricing.

5. The Pitch Clarity Generator ChatGPT Prompt

“Turn this business idea into a clear 60-second explanation: [describe your business]. The explanation should include: the problem it solves, your solution, who it helps, why they would choose it over alternatives, and what success looks like. Write it in conversational language a teenager would actually use.”

Clear communication separates successful entrepreneurs from those with good ideas but poor execution. This prompt helps teens distill complex concepts into compelling explanations they can use everywhere, from social media posts to conversations with potential mentors.

The emphasis on “conversational language a teenager would actually use” matters. Many business pitch templates sound artificial when delivered by young founders. Authenticity matters more than corporate jargon.

Beyond the ChatGPT Prompts: Implementation Strategy

The difference between teens who use these prompts effectively and those who don’t comes down to follow-through. ChatGPT provides direction, but action creates results.

The most successful young entrepreneurs I work with use these prompts as starting points, not endpoints. They take the AI-generated suggestions and immediately test them in the real world. They call potential customers, build simple prototypes, and iterate based on real feedback.

Recent Junior Achievement research shows that 69% of teens have business ideas but feel uncertain about how to get started, with fear of failure being the top concern for 67% of potential teen entrepreneurs. These prompts address that uncertainty by breaking abstract concepts down into concrete next steps.

The Bigger Picture

Teen entrepreneurs using AI tools like ChatGPT represent a shift in how entrepreneurship education is happening. According to Global Entrepreneurship Monitor research, young entrepreneurs are 1.6 times more likely than adults to want to start a business, and they are particularly active in the technology, food and beverage, fashion, and entertainment sectors. Instead of waiting for formal entrepreneurship classes or MBA programs, these young founders are accessing strategic thinking tools right away.

This trend aligns with broader changes in education and the workforce. The World Economic Forum identifies creativity, critical thinking, and resilience as top skills for 2025, capabilities that entrepreneurship naturally develops.

Programs like WIT provide structured support for this journey, but the tools themselves are becoming increasingly accessible. A teen with internet access can now reach business planning resources that were previously available only to established entrepreneurs with significant budgets.

The key is to use these tools thoughtfully. ChatGPT can accelerate thinking and provide frameworks, but it cannot replace the hard work of building relationships, creating products, and serving customers. The best business idea is not the most original one; it is the one that solves a real problem for real people. AI tools can help identify those opportunities, but only action can turn them into businesses that matter.

News

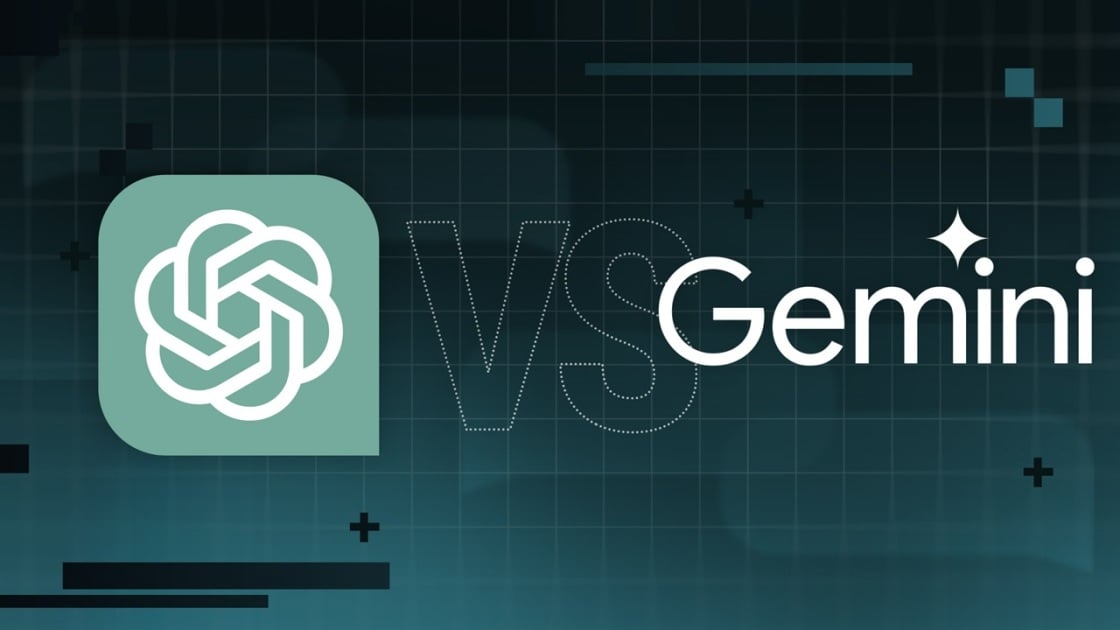

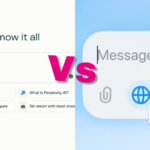

ChatGPT vs. Gemini: I’ve Tested Both, and One Is Definitely Better

Published

10 months ago on

June 5, 2025

Price

ChatGPT and Gemini both have free versions that limit your access to features and models. Premium plans for both also start at around $20 per month. Chatbot features such as deep research, image and video generation, web search, and more are similar across ChatGPT and Gemini. However, paid Gemini plans also include Google Drive cloud storage (starting at 2TB) and a robust set of integrations across Google Workspace apps.

The highest-end tiers of ChatGPT and Gemini unlock increased usage limits and some unique features, but the prohibitive monthly cost of these plans ($200 for ChatGPT Pro or $250 for Gemini AI Ultra) puts them out of reach for most people. Features specific to ChatGPT’s Pro plan, such as the o1 pro mode that leverages extra compute power for particularly complicated questions, aren’t especially relevant to the average consumer, so you won’t feel like you’re missing out. However, you will likely want the features that are exclusive to Gemini’s AI Ultra plan, such as Veo 3 video generation.

Winner: Gemini

Platforms

You can access ChatGPT and Gemini on the web or through mobile apps (Android and iOS). ChatGPT also has desktop apps (macOS and Windows) and an official extension for Google Chrome. Gemini has no dedicated desktop apps or Chrome extension, though it integrates directly with the browser.

(Credit: OpenAI/PCMag)

ChatGPT is available elsewhere, such as through Siri. As mentioned, you can access Gemini in Google apps such as Calendar, Docs, Drive, Gmail, Maps, Keep, Photos, Sheets, and YouTube Music. Both ChatGPT and Gemini models also appear on sites like Perplexity. However, you get the most features from these chatbots in their dedicated apps and web portals.

The interfaces of both chatbots are largely consistent across platforms. They are easy to use and don’t overwhelm you with options and toggles. ChatGPT has a few more settings to play with, such as the ability to adjust its personality, while Gemini’s deep research interface makes better use of screen real estate.

Winner: Tie

AI Models

ChatGPT has two primary series of models: the 4 series (its flagship conversational line) and the o series (its complex reasoning line). Gemini similarly offers a general-purpose Flash series and a Pro series for more complicated tasks.

The latest ChatGPT models are o3 and o4-mini, and Gemini’s latest are 2.5 Flash and 2.5 Pro. Outside of coding or solving an equation, you will spend most of your time using the 4-series and Flash models. Below, you can see how these models perform across a variety of tasks. Which model is better really depends on what you want to do.

Winner: Tie

Web Search

ChatGPT and Gemini can both search for up-to-date information on the web with ease. However, ChatGPT presents article tiles at the bottom of its responses for further reading, has excellent sourcing that makes it easy to link claims to evidence, includes images in responses when relevant, and often provides more detail in its answers. Gemini doesn’t show source names and full article titles, and it includes article tiles and images only when you use Google’s AI Mode. Sourcing in this mode is even less robust; Google relegates sources to clickable carets that don’t highlight the relevant parts of its response.

As part of their web search experiences, ChatGPT and Gemini can help you shop. If you ask for buying advice, both present clickable tiles that link to retailers. However, Gemini generally suggests better products and has a unique feature that lets you upload an image of yourself to digitally try on clothes before you buy.

Winner: ChatGPT

Deep Research

ChatGPT and Gemini can generate reports that run dozens of pages and include more than 50 sources on any topic. The biggest difference between the two comes down to sourcing. Gemini often cites more sources than ChatGPT, but it handles sourcing in deep research reports the same way it does in AI Mode search, which means clickable carets without highlights in the text. Because it is harder to connect the claims in Gemini’s reports to actual sources, it is harder to believe them. ChatGPT’s clear sourcing with in-text highlights is easier to trust. However, Gemini has some quality-of-life features that ChatGPT lacks, such as the ability to export properly formatted reports to Google Docs with a single click. Their tone also differs. ChatGPT’s reports read like elaborate forum posts, while Gemini’s reports read like academic papers.

Winner: ChatGPT

Image Generation

ChatGPT’s image generation impresses regardless of what you ask for, even with complex prompts for comic panels or diagrams. It isn’t perfect, but errors and distortion are minimal. Gemini generates visually appealing images faster than ChatGPT, but they routinely include noticeable errors and distortion. With complicated prompts, especially diagrams, Gemini produced nonsensical results in testing.

Above, you can see how ChatGPT (first slide) and Gemini (second slide) fared with the following prompt: “Generate an image of a fashion studio with simple, rustic decor that contrasts with the nicer space. Include a brown couch and brick walls.” ChatGPT’s image limits its problems to fine detail in the leaves of its plants and the text on its book, while Gemini’s image shows more noticeable problems in its cord tubing and lamp.

Winner: ChatGPT

Video Generation

Gemini’s video generation is best in class, especially since ChatGPT can’t match its ability to produce accompanying audio. Google currently locks Gemini’s latest video generation model, Veo 3, behind the expensive AI Ultra plan, but you get more realistic videos than with ChatGPT. Gemini also has other features that ChatGPT doesn’t, such as the Flow filmmaking tool, which lets you extend generated clips, and the Whisk AI animator, which lets you animate still images. Keep in mind, however, that even with Veo 3, you still need to generate videos several times to get a great result.

In the example above, I asked ChatGPT and Gemini to show me a Rubik’s Cube solver solving a cube. The person in Gemini’s video looks great, and the accompanying audio is competent. At the end, there is nice attention to detail as the frame shifts, simulating the stopping of a selfie recording. Meanwhile, ChatGPT struggled with its cube, distorting it heavily.

Winner: Gemini

File Processing

Understanding files is a strength of both ChatGPT and Gemini. Whether you want them to answer questions about a manual, edit a resume, or tell you something about an image, neither disappoints. However, ChatGPT has the edge over Gemini, since it offers slightly better image recognition and more detailed responses when you ask about uploaded files. Both chatbots still sometimes invent quotes from provided documents or misread images, so be sure to verify their results.

Winner: ChatGPT

Creative Writing

ChatGPT and Gemini can both generate competent poems, plays, stories, and more. ChatGPT, however, stands out between the two because of how unique its responses are and how well it responds to prompts. Gemini’s responses can feel repetitive if you don’t carefully calibrate your requests, and it doesn’t always follow every instruction to the letter.

In the example above, I prompted ChatGPT (first slide) and Gemini (second slide) with the following: “Without referencing anything in your memory or previous responses, I want you to write me a free verse poem. Pay special attention to capitalization, enjambment, line breaks, and punctuation. Since it is free verse, I don’t want a familiar meter or rhyme scheme, but I want it to have a cohesive style.” ChatGPT managed to deliver what I asked for in the prompt, and the result was distinct from previous generations. Gemini had trouble generating a poem that incorporated anything beyond commas and periods, and its poem above reads very similarly to one it generated earlier.

Winner: ChatGPT

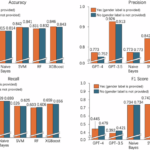

Complex Reasoning

The complex reasoning models from ChatGPT and Gemini can handle computer science, math, and physics questions with ease, as well as competently show their work. In testing, ChatGPT gave correct answers slightly more often than Gemini, but their performance is quite similar. Both chatbots can and will give you wrong answers, so checking their work is still vital if you are doing something important or trying to learn a concept.

Winner: ChatGPT

Integration

ChatGPT has no significant integrations, while Gemini’s integrations are a defining feature. Whether you want help editing an essay in Google Docs, want to share a Chrome tab to ask a question, try a new YouTube Music playlist personalized to your taste, or unlock personal insights in Gmail, Gemini can do all of that and much more. It is hard to overstate how comprehensive and powerful Gemini’s integrations really are.

Winner: Gemini

AI Assistants

ChatGPT has custom GPTs, and Gemini has Gems. Both are customizable AI assistants. Neither is a huge upgrade over talking directly to the chatbots, but third-party custom GPTs add new functionality, such as easy access to Canva for editing generated images. Meanwhile, third parties can’t create Gems, and you can’t share them. You can allow custom GPTs to access external information or take external actions, but Gems have no similar functionality.

Winner: ChatGPT

Context Windows and Usage Limits

ChatGPT’s context window goes up to 128,000 tokens on its higher-tier plans, and all plans have dynamic usage limits based on server load. Gemini, on the other hand, has a 1,000,000-token context window. Google isn’t too clear on the exact usage limits for Gemini, but they are also dynamic depending on server load. Anecdotally, I couldn’t hit the usage limits on the paid plans of either ChatGPT or Gemini, but it is much easier to do so with the free plans.

Winner: Gemini
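To put those two context window sizes in perspective, the rough sketch below estimates whether a given document would fit in each one. It assumes about four characters per token, a common rule of thumb for English text rather than either company's actual tokenizer, so treat the numbers as ballpark figures only. The names `estimate_tokens` and `fits` are hypothetical.

```python
# Context window sizes quoted in the comparison (in tokens).
CONTEXT_WINDOWS = {"ChatGPT": 128_000, "Gemini": 1_000_000}

# Assumption: roughly 4 characters per token. Real tokenizers vary by
# language and content, so this is only a ballpark estimate.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count by ceiling-dividing the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits(text: str) -> dict[str, bool]:
    """Report, per chatbot, whether the estimated tokens fit in its window."""
    tokens = estimate_tokens(text)
    return {name: tokens <= limit for name, limit in CONTEXT_WINDOWS.items()}


# A stand-in document of 1,000,000 characters (200,000 five-character chunks).
document = "word " * 200_000
print(estimate_tokens(document))
print(fits(document))
```

Under this assumption, a document of that size would exceed the smaller window but sit comfortably inside the larger one, which is the practical difference the two figures imply for very long files.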

Privacy

Privacy on ChatGPT and Gemini is a mixed bag. Both collect significant amounts of data, including all of your chats, and use that data to train their AI models by default. However, both give you the option to turn training off. Google at least doesn’t collect and use Gemini data for training purposes in Workspace apps, such as Gmail, by default. ChatGPT and Gemini also promise not to sell your data or use it for ad targeting, but Google and OpenAI have sordid histories when it comes to hacks, leaks, and assorted digital misdeeds, so I recommend not sharing anything too sensitive.

Winner: Tie
